ANSWER ENGINE OPTIMIZATION | Updated March 2026 | 11 min read

What You'll Learn in This Guide

- Why GEO for healthcare requires a different playbook than standard generative engine optimization

- How Google's Gemini, OpenAI's ChatGPT, and Perplexity each cite medical content differently

- The YMYL Trust Architecture that controls whether AI models quote your medical content

- Which healthcare schema types (MedicalOrganization, Physician, FAQPage) trigger AI citations

- How to structure clinical and patient-facing content so LLMs extract it verbatim

- A weekly GEO audit framework built for medical practices and health brands

- The co-citation strategy that positions your brand alongside Mayo Clinic and WebMD

GEO for healthcare is no longer optional. When a patient types "best cardiologist near me" into ChatGPT or asks Google's Gemini about treatment options for lower back pain, the AI either cites your practice or it cites your competitor. There is no page two in a generative search result. There is only cited or invisible.

This guide breaks down exactly how medical brands, hospitals, clinics, and health tech companies can implement generative engine optimization strategies built specifically for the healthcare vertical. The rules here are stricter than general GEO because healthcare content falls under YMYL (Your Money or Your Life), the category Google's quality guidelines hold to the highest standard of trust and one that major LLM providers also handle with extra caution. The strategies in this guide apply across ChatGPT, Perplexity, Gemini, Microsoft Copilot, and Anthropic's Claude.

DIRECT ANSWER: GEO for Healthcare

GEO for healthcare is the practice of optimizing medical content so AI search engines like ChatGPT, Google Gemini, and Perplexity cite and recommend your practice, hospital, or health brand in their responses. Because healthcare content is classified as YMYL, it faces stricter E-E-A-T requirements than any other vertical, meaning AI models will only cite sources that demonstrate verified medical expertise, authoritative third-party validation, and structured data that confirms clinical credibility.

1. Why GEO for Healthcare Plays by Different Rules

Standard GEO strategies work for most industries. Healthcare is not most industries.

Google categorizes medical, health, and wellness content as YMYL in its Search Quality Rater Guidelines, and AI search systems apply similar caution to health topics. When an LLM-powered engine evaluates whether to cite a medical page, it applies a noticeably higher trust threshold than it uses for, say, a marketing blog or a product review.

Here is what that means in practice. A well-optimized blog post about "best project management tools" might earn a ChatGPT citation with solid on-page SEO and a few quality backlinks. A medical page about "symptoms of Type 2 diabetes" needs verified authorship from a credentialed provider, citations to peer-reviewed sources, schema markup confirming clinical authority, and consistent information across multiple independent platforms before any AI model will reference it.

GEO for healthcare demands that you build a trust architecture that satisfies both the AI model's retrieval system and the safety layer that filters medical recommendations. Without this foundation, your healthcare GEO efforts produce zero citations regardless of content quality.

KEY INSIGHT

Research from BrightEdge shows that 89% of healthcare-related queries now trigger Google AI Overviews, the highest trigger rate of any industry vertical. Medical brands that are not optimized for generative citation risk being left out of the AI-generated answer on nearly 9 out of 10 health searches.

2. How Each AI Platform Cites Medical Content in GEO for Healthcare

Not every AI engine pulls from the same sources, and the differences matter when you implement GEO for healthcare.

Google's Gemini favors brand-owned content. Approximately 52% of Gemini citations come from the brand's own website. For medical practices, that means your service pages, provider bios, and condition-specific landing pages carry the most weight. Gemini rewards structured, factual content on your domain, especially pages with MedicalOrganization schema and consistent subdomain architecture.

OpenAI's ChatGPT pulls nearly 49% of its citations from third-party sources. For healthcare, that includes Yelp, Healthgrades, Zocdoc, WebMD, and medical directories. If your practice has thin or inconsistent profiles across these platforms, ChatGPT will cite a competitor with stronger third-party presence instead. Because ChatGPT's web search draws heavily on Bing's index, your Bing Webmaster Tools profile and IndexNow implementation directly affect how quickly your medical content becomes retrievable.

Perplexity sources narrowly and leans into industry-specific directories. In healthcare, Zocdoc and PubMed are disproportionately represented. Perplexity also weights recency heavily, so fresh clinical content and recently updated provider pages outperform static, older pages.

| Platform | Primary Source Type | Healthcare Citation Bias | Key Optimization |
|---|---|---|---|
| Gemini | Brand-owned website (52%) | Service pages, provider bios | MedicalOrganization schema, local landing pages |
| ChatGPT | Third-party sites (49%) | Healthgrades, Zocdoc, Yelp | Consistent directory profiles, IndexNow via Bing |
| Perplexity | Industry directories | Zocdoc, PubMed, NIH sources | Fresh content, clinical citations, recency signals |
| Copilot | Bing index + web | Medical journals, branded pages | Bing Webmaster submission, clear answer blocks |
| Claude | Web-sourced training data | Authority sites, Wikipedia, journals | Entity consistency, co-citation density |

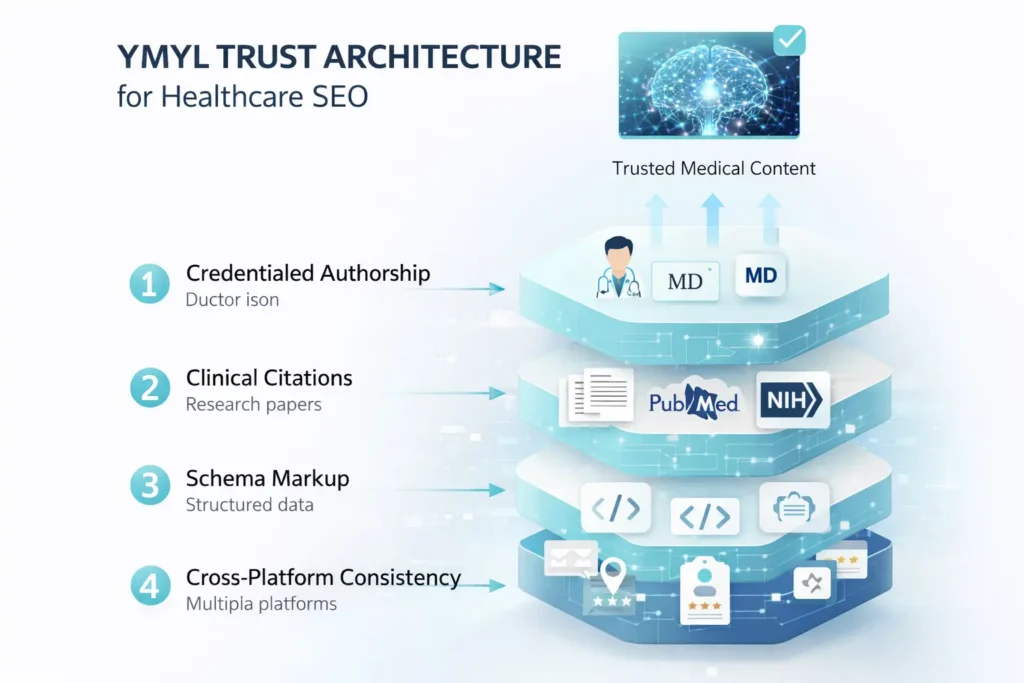

3. The YMYL Trust Architecture: The Core Framework for GEO for Healthcare

Every medical brand implementing GEO for healthcare needs to build what we call the YMYL Trust Architecture. This is a four-layer system that satisfies the trust requirements of AI models specifically for health content.

Layer 1: Credentialed Authorship. Every medical page must attribute content to a named, licensed healthcare provider. Include their NPI number, board certifications, and institutional affiliations. LLMs cross-reference author entities against known medical databases, and pages with anonymous or generic authorship ("written by our team") are filtered out of medical citations.
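Part of verifying an author's NPI can be automated. NPIs are 10-digit numbers whose last digit is a Luhn check digit computed as if the fixed prefix 80840 were in front, so a quick format check catches typos before a number goes into a bio or schema block. The sketch below is a minimal Python example under that assumption; the function names are hypothetical, and a passing check only shows that the number is well formed, not that it belongs to the named provider, which still needs a lookup in the public NPPES NPI Registry.

```python
def npi_check_digit(first_nine: str) -> int:
    """Compute the NPI check digit with the Luhn formula, accounting for the 80840 prefix."""
    total = 24  # fixed contribution of the "80840" prefix to the Luhn sum
    for position, char in enumerate(reversed(first_nine)):
        digit = int(char)
        if position % 2 == 0:
            # Double the rightmost digit and every second digit moving left.
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return (10 - total % 10) % 10


def is_valid_npi(npi: str) -> bool:
    """Return True if npi is 10 ASCII digits and its last digit is a valid check digit."""
    npi = npi.strip()
    if len(npi) != 10 or not (npi.isascii() and npi.isdigit()):
        return False
    return npi_check_digit(npi[:9]) == int(npi[9])


print(is_valid_npi("1234567893"))  # True
print(is_valid_npi("1234567890"))  # False: wrong check digit
```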

Layer 2: Clinical Citation Density. Link to PubMed studies, NIH resources, CDC guidelines, and peer-reviewed journals within your content. AI models trained on medical corpora weight pages that reference established clinical sources. This is not optional for healthcare GEO. It is a prerequisite.

Layer 3: Schema Confirmation. Use healthcare-specific structured data (MedicalOrganization, Physician, MedicalCondition, FAQPage) to confirm what your page is about and who stands behind it. Generic Article schema is not sufficient for medical content. The schema must explicitly declare clinical authority.

Layer 4: Cross-Platform Consistency. Your practice name, provider credentials, specialties, and contact information must be identical across your website, Google Business Profile, Healthgrades, Zocdoc, Yelp, and every directory where you appear. AI models use cross-platform consistency as a trust signal. Mismatched data creates doubt, and doubt means no citation.
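A minimal sketch of a consistency check, assuming you have already copied each listing's details into Python dictionaries by hand or from an export (no directory API is assumed). The website record is treated as the source of truth, and values are normalized so that case, punctuation, and phone formatting differences are ignored; everything else, including "St" versus "Street", is reported. The function names, field names, and sample practice are hypothetical.

```python
import re


def normalize(field: str, value: str) -> str:
    """Reduce a value to a comparable form so cosmetic differences are ignored."""
    if field == "phone":
        return re.sub(r"\D", "", value)[-10:]  # keep the last 10 digits (assumes US numbers)
    value = re.sub(r"[^\w\s]", "", value.lower())  # ignore case and punctuation
    return " ".join(value.split())  # collapse runs of whitespace


def find_mismatches(canonical: dict[str, str], profiles: dict[str, dict[str, str]]) -> list[tuple]:
    """Compare each platform's listing with the canonical (website) record."""
    mismatches = []
    for platform, listing in profiles.items():
        for field, expected in canonical.items():
            actual = listing.get(field)  # None means the field is missing on that platform
            if actual is None or normalize(field, actual) != normalize(field, expected):
                mismatches.append((platform, field, expected, actual))
    return mismatches


# Hypothetical practice data copied from each listing.
website = {"name": "Lakeside Heart Clinic", "phone": "(555) 010-2000", "address": "12 Harbor St, Suite 4"}
profiles = {
    "Healthgrades": {"name": "Lakeside Heart Clinic", "phone": "555-010-2000", "address": "12 Harbor St., Suite 4"},
    "Zocdoc": {"name": "Lakeside Heart & Vascular", "phone": "5550102000", "address": "12 Harbor St, Suite 4"},
}
for platform, field, expected, actual in find_mismatches(website, profiles):
    print(f"{platform}: {field} is {actual!r}, website says {expected!r}")
```

Only the Zocdoc practice name is reported here; the Healthgrades address and both phone numbers differ only cosmetically.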

CRITICAL RULE

Never publish medical content attributed to "Staff Writer" or "Admin." AI models trained on YMYL-aligned safety guidelines actively filter unattributed health content from citation pools. Every medical page needs a named, credentialed author with verifiable clinical qualifications.

4. The GEO for Healthcare Schema Stack That Triggers AI Citations

The schema markup for GEO for healthcare goes beyond standard BlogPosting or Article types. You need a layered schema stack that tells AI systems exactly what your medical entity is, who your providers are, and what conditions or services you cover.

Required schema types for medical practices:

| Schema Type | Best Used For | AI Citation Benefit | Critical Properties |
|---|---|---|---|
| MedicalOrganization | Practice/hospital entity pages | Confirms medical authority to LLMs | name, medicalSpecialty, address, telephone |
| Physician | Individual provider bio pages | Links provider credentials to content | name, medicalSpecialty, usNPI, hasCredential, hospitalAffiliation |
| MedicalCondition | Condition/treatment pages | Maps content to specific health queries | name, signOrSymptom, possibleTreatment, riskFactor |
| FAQPage | Patient FAQ and Q&A pages | Directly extractable by AI answer engines | mainEntity (Question/Answer pairs matching page content) |
| MedicalClinic | Clinic-specific pages | Combines medical and business signals | openingHours, availableService, medicalSpecialty |
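The stack above can be generated from one source of provider data so the markup never drifts from your records. This Python sketch builds a single JSON-LD `@graph` with a MedicalOrganization node and linked Physician nodes, using the schema.org properties `medicalSpecialty`, `usNPI`, `hospitalAffiliation`, and `memberOf`. The practice, physician, hospital, address, and NPI shown are hypothetical placeholders that must be replaced with real, verified data, and the function name is an assumption. The output would be placed in a `<script type="application/ld+json">` tag on the relevant page.

```python
import json


def build_practice_jsonld(practice: dict, physicians: list[dict]) -> str:
    """Return JSON-LD describing a practice and its physicians as one linked @graph."""
    org_id = practice["url"].rstrip("/") + "/#organization"  # stable node ID for cross-references
    graph = [{
        "@type": "MedicalOrganization",
        "@id": org_id,
        "name": practice["name"],
        "url": practice["url"],
        "telephone": practice["telephone"],
        "medicalSpecialty": practice["specialties"],
        "address": {"@type": "PostalAddress", **practice["address"]},
    }]
    for doc in physicians:
        graph.append({
            "@type": "Physician",
            "name": doc["name"],
            "usNPI": doc["npi"],
            "medicalSpecialty": doc["specialties"],
            "hospitalAffiliation": {"@type": "Hospital", "name": doc["hospital"]},
            "memberOf": {"@id": org_id},  # links the provider back to the practice node
        })
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


# Hypothetical placeholder data: replace every value with verified details.
practice = {
    "name": "Lakeside Heart Clinic",
    "url": "https://www.example.com/",
    "telephone": "+1-555-010-2000",
    "specialties": ["Cardiovascular"],
    "address": {
        "streetAddress": "12 Harbor St, Suite 4",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
    },
}
physicians = [{
    "name": "Jane Doe, MD",
    "npi": "1234567893",
    "specialties": ["Cardiovascular"],
    "hospital": "Example General Hospital",
}]

jsonld = build_practice_jsonld(practice, physicians)
print(jsonld)
```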

CRITICAL RULE

Do not use generic schema templates for medical pages. Every schema block must be populated with actual provider names, real NPI numbers, verified specialties, and accurate service details. AI models can detect template schema that does not match page content, and mismatched schema hurts trust scoring.
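One way to catch template schema before it ships is to check that the values in each JSON-LD block actually appear in the page's visible text. This Python sketch uses only the standard library's `html.parser`; the class and function names, the choice of fields to verify, and the exact-substring comparison are simplifying assumptions, so a phone number formatted differently in the markup and on the page will be flagged even if it is the same number.

```python
import json
from html.parser import HTMLParser

CHECKED_FIELDS = ("name", "telephone", "usNPI")  # hypothetical choice of fields to verify


class PageScanner(HTMLParser):
    """Collect visible text and the raw contents of JSON-LD script blocks."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.jsonld_blocks = []
        self._jsonld = None  # buffer while inside a JSON-LD <script>
        self._skip = False   # True inside other <script> or <style> elements

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._jsonld = []
        elif tag in ("script", "style"):
            self._skip = True

    def handle_endtag(self, tag):
        if tag == "script" and self._jsonld is not None:
            self.jsonld_blocks.append("".join(self._jsonld))
            self._jsonld = None
        elif tag in ("script", "style"):
            self._skip = False

    def handle_data(self, data):
        if self._jsonld is not None:
            self._jsonld.append(data)
        elif not self._skip:
            self.text_parts.append(data)


def unmatched_schema_values(html: str) -> list[str]:
    """List schema values (for CHECKED_FIELDS) that never appear in the visible page text."""
    scanner = PageScanner()
    scanner.feed(html)
    scanner.close()
    visible = " ".join(" ".join(scanner.text_parts).split()).lower()
    missing = []
    for block in scanner.jsonld_blocks:
        data = json.loads(block)
        nodes = data if isinstance(data, list) else data.get("@graph", [data])
        for node in nodes:
            for field in CHECKED_FIELDS:
                value = node.get(field)
                if isinstance(value, str) and " ".join(value.split()).lower() not in visible:
                    missing.append(f"{node.get('@type')}.{field}: {value}")
    return missing


page = """<html><body>
<h1>Lakeside Heart Clinic</h1>
<p>Call +1-555-010-2000 to book.</p>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "MedicalOrganization",
 "name": "Lakeside Heart Clinic", "telephone": "+1-555-010-2000"}
</script>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Physician",
 "name": "Dr. Sample Name", "usNPI": "0000000000"}
</script>
</body></html>"""

print(unmatched_schema_values(page))
```

In this example the Physician block is template data that appears nowhere on the page, so both of its checked values are reported.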

5. Content Structure for GEO for Healthcare That LLMs Actually Extract

AI models do not read medical content the way a patient does. They scan for extractable answer blocks, structured clinical information, and clear question-answer patterns. Your content structure determines whether your page gets cited or gets skipped.

Open with the direct answer. The first 40 to 60 words below every H2 heading should answer the heading question completely. AI search systems pull answers from content that directly and immediately addresses the query. Burying the answer three paragraphs deep means the LLM moves on to a competitor who answers faster.

Use H2 headings as patient questions. Instead of "Our Cardiology Services," write "What conditions does a cardiologist treat?" Match the exact phrasing patients use when asking AI assistants about health topics.
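Both rules can be checked automatically before publishing. The Python sketch below pairs each `<h2>` with the first `<p>` that follows it, flags headings that are not phrased as questions, and flags opening paragraphs outside the 40 to 60 word range. Paragraph length is only a proxy: it cannot tell whether the paragraph actually answers the heading, so a human review is still needed. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class SectionParser(HTMLParser):
    """Pair each <h2> with the text of the first <p> that follows it."""

    def __init__(self):
        super().__init__()
        self.sections = []       # [heading_text, first_paragraph_text] pairs
        self._capture = None     # "h2" or "p" while collecting text
        self._buffer = []
        self._awaiting_paragraph = False

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._capture, self._buffer = "h2", []
        elif tag == "p" and self._awaiting_paragraph:
            self._capture, self._buffer = "p", []

    def handle_endtag(self, tag):
        text = " ".join("".join(self._buffer).split())
        if tag == "h2" and self._capture == "h2":
            self.sections.append([text, ""])
            self._capture, self._awaiting_paragraph = None, True
        elif tag == "p" and self._capture == "p":
            self.sections[-1][1] = text
            self._capture, self._awaiting_paragraph = None, False

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)


def audit_sections(html: str, min_words: int = 40, max_words: int = 60) -> list[str]:
    """Flag non-question H2s and opening paragraphs outside the target word range."""
    parser = SectionParser()
    parser.feed(html)
    parser.close()
    problems = []
    for heading, answer in parser.sections:
        if not heading.endswith("?"):
            problems.append(f"Heading is not a question: {heading!r}")
        word_count = len(answer.split())
        if not min_words <= word_count <= max_words:
            problems.append(f"{heading!r}: opening paragraph has {word_count} words")
    return problems


page = (
    "<h2>What conditions does a cardiologist treat?</h2><p>" + " ".join(["word"] * 45) + "</p>"
    + "<h2>Our Cardiology Services</h2><p>We offer comprehensive care.</p>"
)
print(audit_sections(page))
```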

Include clinical specifics. This is where GEO for healthcare separates from generic optimization. Vague phrases like "we offer comprehensive care" tell an AI nothing extractable. Instead, write "Our board-certified cardiologists treat atrial fibrillation, coronary artery disease, heart failure, and hypertension using evidence-based protocols aligned with current ACC/AHA guidelines." That sentence can be quoted. The vague version cannot.

6. Co-Citation Strategy: The Hidden Driver of GEO for Healthcare

Co-citation is the single most overlooked element in GEO for healthcare. When your brand appears alongside trusted medical entities (Mayo Clinic, Cleveland Clinic, Johns Hopkins, NIH) across multiple independent sources, AI models begin to associate your brand with the same authority tier.

Build co-citation by contributing articles to medical journals and health publications, earning press coverage alongside established health systems, and maintaining active profiles on platforms where top medical institutions also appear. Your E-E-A-T signals compound when AI models observe your brand in the same contexts as recognized authorities.

This is not about claiming equivalence to Mayo Clinic. Effective GEO for healthcare co-citation means making sure that when an AI model processes information about your specialty, your practice appears in enough overlapping source contexts that the model assigns meaningful entity weight to your brand.
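Co-citation can be tracked roughly by counting how often your brand and each reference institution are mentioned in the same document, across press coverage, directory pages, or other sources you collect yourself. This Python sketch assumes you already have those documents as plain text; it measures document-level co-occurrence only and is not a model of how any AI system assigns entity weight. The function names, brand name, and sample texts are hypothetical.

```python
import re
from collections import Counter

# Reference institutions named in this guide; adjust for your specialty.
AUTHORITIES = ("Mayo Clinic", "Cleveland Clinic", "Johns Hopkins", "NIH")


def mentions(name: str, text: str) -> bool:
    """Case-insensitive whole-phrase match."""
    return re.search(r"\b" + re.escape(name) + r"\b", text, re.IGNORECASE) is not None


def co_citation_counts(brand: str, documents: list[str], authorities=AUTHORITIES) -> Counter:
    """Count the documents in which brand is mentioned together with each authority."""
    counts = Counter()
    for text in documents:
        if mentions(brand, text):
            counts.update(a for a in authorities if mentions(a, text))
    return counts


docs = [
    "Lakeside Heart Clinic joined Mayo Clinic researchers at the regional cardiology summit.",
    "Mayo Clinic publishes new guidance on atrial fibrillation.",
    "Lakeside Heart Clinic cites NIH data and Johns Hopkins research in its patient guide.",
]
print(co_citation_counts("Lakeside Heart Clinic", docs))
```

Tracking these counts over time shows whether outreach is actually placing the brand in the same sources as the institutions it wants to be associated with.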

7. Technical GEO for Healthcare: robots.txt and IndexNow Setup

Your technical SEO configuration determines whether AI crawlers can even access your medical content. No GEO for healthcare strategy works if bots are blocked at the door. Many healthcare websites block AI bots by default through restrictive robots.txt rules or CDN-level bot filtering. If OAI-SearchBot, PerplexityBot, or ClaudeBot cannot crawl your pages, no amount of content optimization will earn citations.
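Before editing robots.txt, it helps to confirm which AI crawlers your current file actually blocks. This sketch uses Python's standard `urllib.robotparser`; that parser's matching rules are simpler than those of the major search engines, and it cannot see CDN-level blocking, so treat a clean result as necessary rather than sufficient. The agent list and the `blocked_ai_agents` name are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# AI crawler tokens named in this guide; extend the list as needed.
AI_AGENTS = ("OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot")


def blocked_ai_agents(robots_txt: str, url: str, agents=AI_AGENTS) -> list[str]:
    """Return the agents that robots_txt does not allow to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Example: a file that allows only OAI-SearchBot and blocks everyone else.
robots = """User-agent: OAI-SearchBot
Allow: /

User-agent: *
Disallow: /
"""
print(blocked_ai_agents(robots, "https://www.example.com/cardiology"))
```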

Add these directives to your robots.txt:

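```
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Google-Extended
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: anthropic-ai
Allow: /
```

Keep each User-agent line directly above its Allow line: some robots.txt parsers treat a blank line as the end of a group, so a blank line between the two can cause the rule to be ignored. Note also that Google-Extended is a control token rather than a separate crawler; it governs whether Google may use your content for Gemini models and grounding, and it does not affect Google Search or AI Overviews.

Because ChatGPT's web search draws heavily on Bing's index, you cannot rely on passive crawling alone. Implement the IndexNow protocol to notify Bing the moment you publish or update a page. IndexNow prompts Bing and other participating search engines to recrawl the submitted URLs sooner, shortening the delay before new content can surface in ChatGPT search, although it does not guarantee indexing. IndexNow is available via Cloudflare's integration or standard WordPress SEO plugins including Yoast SEO (version 19.0+) and Rank Math.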
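For sites without a plugin, an IndexNow submission is a single JSON POST. This minimal Python sketch uses only the standard library and assumes you have generated a key and host it as a text file on your domain, as the IndexNow protocol requires. The endpoint and JSON fields (`host`, `key`, `keyLocation`, `urlList`) follow the public IndexNow documentation; the function name, key, and URLs are hypothetical. The request is built but not sent here.

```python
import json
import urllib.request
from typing import Optional
from urllib.parse import urlparse

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(
    host: str, key: str, urls: list[str], key_location: Optional[str] = None
) -> urllib.request.Request:
    """Build (but do not send) an IndexNow POST for URLs on one host."""
    for url in urls:
        if urlparse(url).netloc != host:
            raise ValueError(f"{url} is not on {host}")  # each submission covers a single host
    payload = {"host": host, "key": key, "urlList": urls}
    if key_location:
        payload["keyLocation"] = key_location  # only needed if the key file is not at /<key>.txt
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    "www.example.com",
    "0f3c1e5a8b2d4c6e",  # hypothetical key; the same value must be served at /0f3c1e5a8b2d4c6e.txt
    ["https://www.example.com/conditions/atrial-fibrillation"],
)
# To submit it: with urllib.request.urlopen(request) as response: print(response.status)
```

Validating the host before building the request keeps one stray URL from another domain from causing the whole batch to be rejected.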

8. Weekly GEO for Healthcare Audit Framework

GEO for healthcare is not a one-time optimization. AI models update their sources continuously, and competitor medical practices are actively optimizing for the same patient queries. Run this audit weekly:

- Prompt test across platforms. Type 5 to 10 patient queries into ChatGPT, Gemini, Perplexity, and Copilot. Record whether your practice is cited, which competitor appears, and what source the citation comes from.

- Check directory consistency. Verify that your practice name, specialties, provider names, and contact details match across Healthgrades, Zocdoc, Yelp, Google Business Profile, and your website.

- Review content freshness. Any medical page not updated in 90 days loses recency weight. Refresh clinical references at least quarterly, and update the dateModified value in your schema whenever you make a substantive change, not just to reset the date.

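- Audit schema validity. Run your key pages through the Schema Markup Validator (validator.schema.org), which checks all schema.org types, and through Google's Rich Results Test for the types Google supports as rich results. Confirm MedicalOrganization, Physician, and FAQPage schema are error-free and match actual page content.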

- Track AI search visibility metrics. Log citation counts by platform, track brand mentions in AI responses, and compare share of voice against the top three competitors in your specialty.
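The freshness and visibility steps reduce to simple bookkeeping once results are logged. This Python sketch assumes a hypothetical log format in which each prompt test records the platform, the query, and the brands cited, and it defines share of voice as a brand's fraction of all brand citations recorded on that platform; other definitions, such as the fraction of prompts in which a brand is cited, are equally reasonable. The 90-day threshold comes from the checklist above; the function names and sample data are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta


def stale_pages(date_modified: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return URLs whose dateModified is more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, modified in date_modified.items() if modified < cutoff)


def share_of_voice(prompt_log: list[dict]) -> dict[str, dict[str, float]]:
    """Per platform, each brand's fraction of all brand citations logged for that platform."""
    counts_by_platform: dict[str, Counter] = {}
    for row in prompt_log:
        counts = counts_by_platform.setdefault(row["platform"], Counter())
        counts.update(set(row["cited"]))  # count each brand at most once per prompt
    return {
        platform: {brand: n / sum(counts.values()) for brand, n in counts.items()}
        for platform, counts in counts_by_platform.items()
    }


# Hypothetical week of data.
pages = {"/conditions/afib": date(2025, 11, 1), "/providers/jane-doe": date(2026, 3, 1)}
log = [
    {"platform": "ChatGPT", "query": "best cardiologist near me", "cited": ["Lakeside Heart Clinic", "Rival Cardiology"]},
    {"platform": "ChatGPT", "query": "afib treatment options", "cited": ["Rival Cardiology"]},
    {"platform": "Gemini", "query": "best cardiologist near me", "cited": ["Lakeside Heart Clinic"]},
]
print(stale_pages(pages, today=date(2026, 3, 15)))
print(share_of_voice(log))
```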

9. Common GEO for Healthcare Mistakes

| Mistake | Why It Hurts | Fix |
|---|---|---|
| No credentialed author on medical pages | AI models filter unattributed YMYL content from citation pools | Add named physician author with NPI, certifications, and institutional affiliation |
| Generic "About Us" schema only | LLMs cannot verify medical authority without healthcare-specific structured data | Implement MedicalOrganization + Physician schema with actual provider details |
| Blocking AI bots in robots.txt | OAI-SearchBot, PerplexityBot, and ClaudeBot cannot crawl pages they are blocked from | Explicitly allow all AI user agents in robots.txt |
| Inconsistent directory profiles | Cross-platform mismatches reduce trust scoring in AI retrieval systems | Audit Healthgrades, Zocdoc, Yelp, and GBP weekly for NAP and specialty consistency |
| Vague clinical language | "Comprehensive care" and "holistic approach" give AI nothing extractable to cite | Replace with specific conditions, procedures, evidence-based protocols, and outcomes data |
| No direct answer blocks | LLMs skip pages that bury answers below multiple introductory paragraphs | Answer every H2 question in the first 40 to 60 words below the heading |
| Passive Bing crawling | ChatGPT cannot cite pages that are not yet in Bing's index | Submit new and updated URLs via IndexNow on publish so Bing discovers them faster |
| No information gain | Generic content identical to every other medical website gets passed over | Include original clinical frameworks, provider-specific protocols, or outcome data |

Article Summary

- GEO for healthcare requires a specialized approach because all medical content falls under YMYL, the highest trust classification for AI models. Standard GEO tactics alone are not sufficient.

- 89% of healthcare queries now trigger Google AI Overviews, making generative citation the primary visibility channel for medical brands.

- Each AI platform cites differently: Gemini favors brand-owned sites (52%), ChatGPT leans on third-party directories (49%), and Perplexity weights Zocdoc and PubMed.

- The YMYL Trust Architecture has four layers: credentialed authorship, clinical citation density, healthcare schema confirmation, and cross-platform consistency.

- Healthcare schema must include MedicalOrganization, Physician, MedicalCondition, and FAQPage types populated with real provider data.

- Content must open with direct answers in the first 40 to 60 words below each heading to match LLM extraction patterns.

- Co-citation alongside established medical authorities (Mayo Clinic, Cleveland Clinic, NIH) compounds your brand's entity weight in AI models.

- AI crawlers (OAI-SearchBot, PerplexityBot, ClaudeBot) must be explicitly allowed in robots.txt or your medical content stays invisible.

- IndexNow implementation is mandatory for GEO for healthcare because ChatGPT search draws heavily on Bing's index and passive crawling is too slow for healthcare content updates.

- Weekly GEO for healthcare audits covering prompt testing, directory consistency, content freshness, schema validation, and AI visibility tracking keep your practice competitive in generative search results.

Frequently Asked Questions

What is GEO for healthcare and why does it matter for medical brands?

GEO for healthcare is the practice of optimizing medical content so AI search engines cite and recommend your practice in their responses. It differs from regular SEO because healthcare content is classified as YMYL, which means AI models apply stricter trust requirements before citing medical sources. You need credentialed authorship, clinical references, healthcare-specific schema markup, and cross-platform consistency that general SEO does not require.

Which AI platforms are most important for medical practices to optimize for?

Google's Gemini, OpenAI's ChatGPT, and Perplexity are the three highest-priority platforms for healthcare GEO. Google AI Overviews, which are powered by Gemini, appear on 89% of health queries, and Gemini favors brand-owned content. ChatGPT pulls heavily from Bing-indexed third-party directories like Healthgrades and Zocdoc. Perplexity weights clinical sources and medical directories. Microsoft Copilot and Anthropic's Claude are growing in healthcare query volume and should also be included in your optimization strategy.

What schema markup should medical practices use for AI search optimization?

Medical practices should implement a layered schema stack: MedicalOrganization for the practice entity, Physician for individual provider pages, MedicalCondition for condition and treatment pages, MedicalClinic for location-specific pages, and FAQPage for any patient Q&A content. Every schema block must use real provider data, actual NPI numbers, and verified specialties. Generic templates with placeholder data hurt rather than help.

How do AI models decide which medical sources to cite?

AI models evaluate medical sources based on E-E-A-T signals amplified for YMYL content. They check for named physician authorship with verifiable credentials, citations to peer-reviewed clinical sources, healthcare-specific structured data, and consistent brand information across multiple independent platforms. Pages that meet all four criteria enter the citation pool. Pages missing any single element are filtered out for health-related queries.

How often should medical practices audit their GEO for healthcare performance?

Medical practices should run a GEO for healthcare audit weekly. This includes prompt-testing 5 to 10 patient queries across ChatGPT, Gemini, Perplexity, and Copilot, checking directory consistency on Healthgrades, Zocdoc, and Google Business Profile, refreshing any medical content older than 90 days, validating schema markup, and tracking AI citation counts by platform against competitors.
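Patient Q&A content like the questions above can also be marked up as FAQPage schema. This is a minimal Python sketch that builds the JSON-LD from (question, answer) pairs; the pairs should be copied verbatim from the visible page so the markup matches the content, and the function name is hypothetical.

```python
import json


def build_faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs shown verbatim on the page."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in pairs
            ],
        },
        indent=2,
    )


faq = [(
    "How often should medical practices audit their GEO for healthcare performance?",
    "Medical practices should run a GEO for healthcare audit weekly. This includes prompt-testing 5 to 10 "
    "patient queries across ChatGPT, Gemini, Perplexity, and Copilot, checking directory consistency on "
    "Healthgrades, Zocdoc, and Google Business Profile, refreshing any medical content older than 90 days, "
    "validating schema markup, and tracking AI citation counts by platform against competitors.",
)]
print(build_faq_jsonld(faq))
```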