ANSWER ENGINE OPTIMIZATION | Updated March 2026 | 9 min read

WHAT YOU'LL LEARN IN THIS GUIDE

- What LLM seeding strategy is and how it differs from traditional SEO

- How ChatGPT, Gemini, and Perplexity choose which brands to mention in their answers

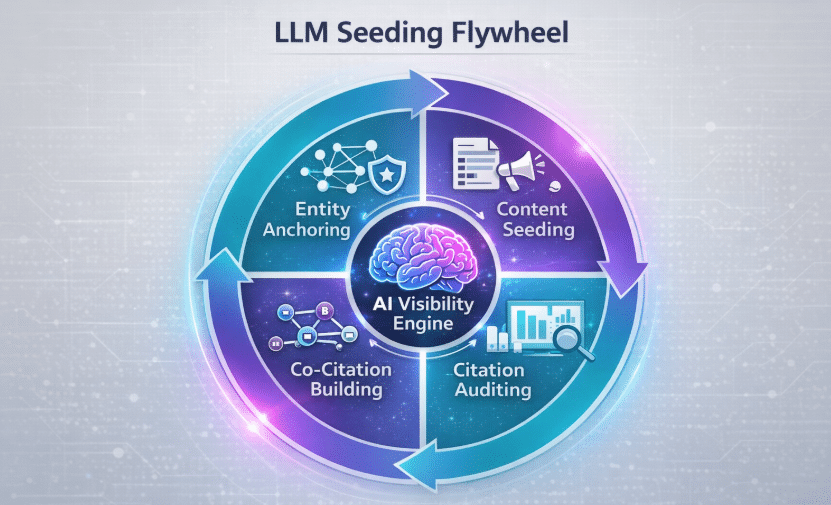

- The 4-Phase LLM Seeding Flywheel for systematic AI brand visibility

- How entity anchoring establishes your brand in AI knowledge graphs

- Where to publish content so AI engines actually find and cite it

- How co-citation networks amplify your brand's AI presence

- The robots.txt and schema setup that unlocks AI crawler access

- How to measure your brand's mention rate and citation score

LLM seeding strategy is the practice of systematically placing brand content in the sources, formats, and platforms that AI language models scrape, train on, and cite. It's what separates brands that appear in ChatGPT answers from brands that are invisible to AI search. Most SEO teams haven't built this into their workflow yet, and that gap is exactly the opportunity.

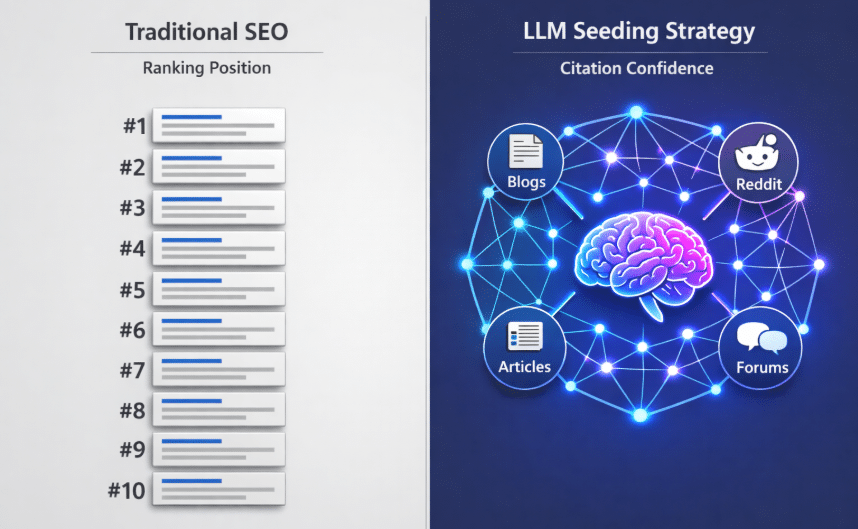

Here's the uncomfortable truth: nearly 90% of OpenAI's ChatGPT citations come from pages that rank at position 21 or lower in Google. Traditional search rankings don't predict AI citation rates. An article buried on page four of Google results can outperform a competitor's top-ranked page inside ChatGPT, Perplexity, or Google's Gemini, if it's structured right and seeded in the right places. That's the promise of a well-executed LLM seeding strategy.

This guide covers exactly how to build one. You'll get the full four-phase process Fuel Online uses to generate consistent AI brand visibility for our clients, from entity anchoring through co-citation building, citation auditing, and the technical setup that clears the path for AI crawlers.

DIRECT ANSWER: LLM Seeding Strategy

An LLM seeding strategy is a structured approach to getting your brand consistently mentioned and cited by AI language models including OpenAI's ChatGPT, Google's Gemini, and Perplexity. It works by publishing brand-optimized content in sources AI models are trained on or actively crawl, building entity authority across the web, and establishing co-citation relationships with recognized brands and publishers. Unlike traditional SEO, LLM seeding targets AI citation confidence rather than search ranking position.

1. What Is LLM Seeding Strategy and Why It Works Differently Than SEO

LLM seeding strategy operates on a fundamentally different logic than search engine optimization. Google ranks pages. AI engines cite sources.

When someone types a question into Google's Gemini or OpenAI's ChatGPT Search, the AI doesn't return a list of ranked links. It synthesizes an answer, and it either names your brand as part of that answer or it doesn't. There's no position two. You're either cited or you're not.

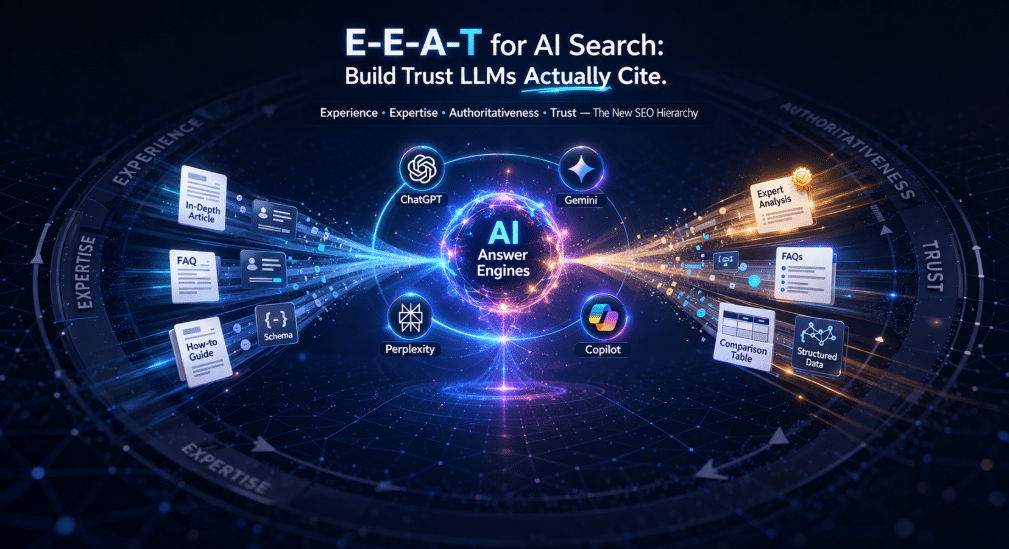

This means the goal of LLM seeding isn't to rank higher. It's to increase the AI's confidence that your brand is a credible, relevant source for a given topic. That confidence is built through a combination of entity recognition, content structure, co-citation signals, and source diversity. Get those four things right and AI engines start treating your brand as a default reference.

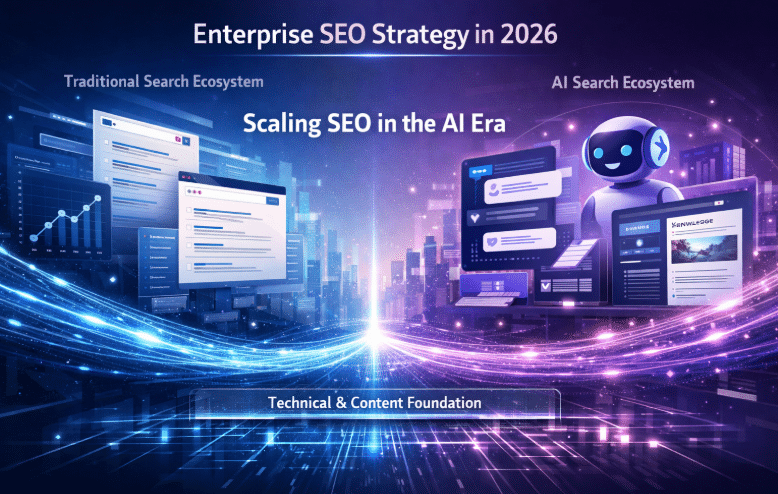

ChatGPT currently commands approximately 79% of global generative AI web traffic. Google's Gemini grew 157% between April and September 2025 alone, reaching 1.1 billion monthly visits. Perplexity, which focuses on cited, research-grade answers, has reached 170 million monthly visits. These aren't niche platforms anymore. They're mainstream discovery channels, and your LLM seeding strategy needs to treat them as primary acquisition targets.

2. How ChatGPT, Gemini, and Perplexity Decide Which Brands to Mention

Understanding the citation mechanics is the foundation of any LLM seeding strategy. Each platform pulls from different source types.

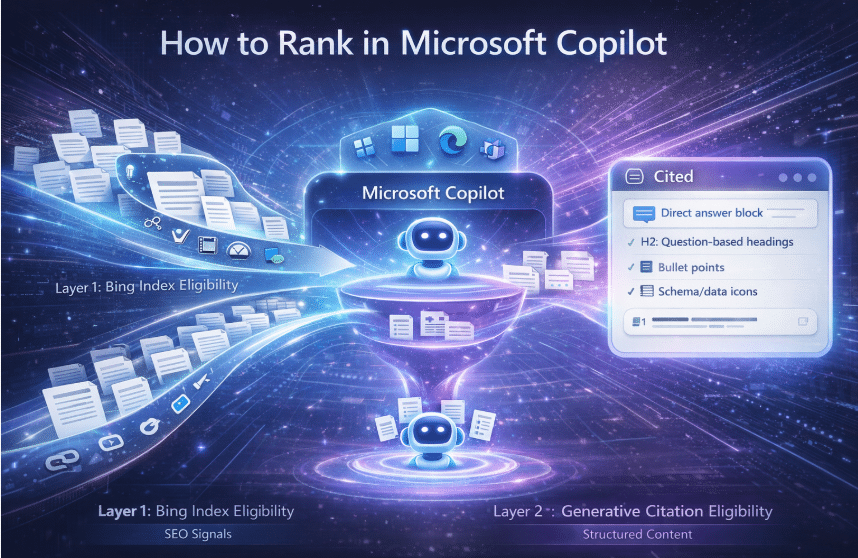

OpenAI's ChatGPT uses Bing's live web index for real-time queries and training data for contextual knowledge. Content that appears in Bing's index and is structured for easy extraction has a direct path to ChatGPT citations. This is why IndexNow, the open URL-submission protocol that Microsoft Bing supports, matters: because ChatGPT's live-web browsing is powered by Bing, you shouldn't wait for passive crawling. Implement IndexNow to ping Bing the moment you publish or update a page, so your content can be picked up quickly instead of whenever the crawler next visits. IndexNow is available through Cloudflare's integration or standard WordPress SEO plugins including Yoast SEO (version 19.0+) and Rank Math.
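If you aren't on Cloudflare or a plugin that handles it for you, an IndexNow ping is just a small JSON POST. Here's a minimal sketch of what that request could look like. The host, key, and URL are placeholders we made up for illustration, and the sketch assumes you've already hosted the key file at the root of your site. It builds the request without sending it, so you can inspect the payload first.

```python
import json
from urllib import request

# Shared IndexNow endpoint; participating engines such as Bing receive submissions from it.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build (but do not send) an IndexNow POST request for a batch of URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file lives at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder values, not real credentials.
    req = build_indexnow_request(
        "www.example.com",
        "0123456789abcdef",
        ["https://www.example.com/blog/new-post"],
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # To actually submit: request.urlopen(req)
```

Wire something like this into your publish hook so every new or updated URL gets submitted in the same step. A successful submission only tells the search engine the URL changed; it doesn't guarantee indexing.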

Google's Gemini pulls from Google's search index, Google's Knowledge Graph, and structured sources like Wikipedia and Google Scholar. Entity-rich content with strong schema markup and consistent brand signals across the web performs best with Gemini.

Perplexity operates as a citation-first answer engine. It actively values pages that are clearly attributable, well-structured, and link to verifiable sources. Perplexity's citation model rewards editorial credibility over domain authority.

The common thread: all three platforms favor content that is easy to extract, attribute, and verify. LLM seeding strategy is the discipline of building exactly that.

3. Phase 1 — Entity Anchoring: Making Your Brand a Known Entity

Before AI engines will cite your brand, they need to recognize it as a coherent entity. Entity anchoring is the foundational layer of every LLM seeding strategy.

An entity is how AI models understand and categorize a specific brand, person, or organization. When an AI engine encounters "Fuel Online" repeatedly across authoritative sources, structured data, and consistent descriptions, it builds a confidence model: "Fuel Online is a digital marketing agency specializing in AI SEO and AEO." That confidence model is what triggers unprompted brand mentions.

How to anchor your brand entity:

- Create a clear, consistent brand descriptor. Every mention of your company across all platforms should use the same core description: "[Brand] is a [category] that [differentiator]." This gives AI models a reliable extraction pattern.

- Build and maintain your Google Knowledge Panel. Verify your brand on Google, keep business information current, and ensure your Wikipedia page (if applicable) or Wikidata entry accurately reflects your positioning.

- Publish a detailed About page that follows definition-style structure: what you do, who you serve, and what makes you different, stated as verifiable facts.

- Maintain consistent NAP (Name, Address, Phone) data across directories. Inconsistent entity signals reduce AI citation confidence.

- Implement Organization schema on your homepage and service pages. This gives AI crawlers machine-readable confirmation of who you are.
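To make the Organization schema step concrete, here's a rough sketch that generates the JSON-LD from a single canonical descriptor, so the description in your markup can't drift from the one you use everywhere else. The URL and profile links are placeholders, and the function names are our own, not part of any library.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Return Organization JSON-LD as a formatted string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # Use the same canonical brand descriptor you publish everywhere else.
        "description": description,
        # Profiles that confirm the entity (social accounts, Wikidata, and so on).
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap JSON-LD in the script tag that goes in the page <head>."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    markup = organization_jsonld(
        name="Fuel Online",
        url="https://www.example.com",  # placeholder URL
        description=(
            "Fuel Online is a digital marketing agency "
            "specializing in AI SEO and AEO."
        ),
        same_as=["https://www.linkedin.com/company/example"],  # placeholder
    )
    print(as_script_tag(markup))
```

Keeping the descriptor in one place and generating the markup from it is a simple way to enforce the consistency this phase depends on.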

KEY INSIGHT

AI engines don't cite brands they're uncertain about. Entity anchoring reduces that uncertainty by creating a web of consistent, verifiable brand signals that LLMs can cross-reference. The more consistent and widespread your entity signals are, the more confident an AI becomes in naming you.

4. Phase 2 — Content Seeding: Publishing Where AI Actually Looks

Entity anchoring establishes who you are. Content seeding establishes what you know. This is where LLM seeding strategy gets tactical.

Research shows 71% of AI citations come from content published between 2023 and 2025. Freshness matters. But location matters more. Publishing on your own website isn't enough for a strong LLM seeding strategy. You need to seed your expertise across the platforms AI models actively scrape and train on.

High-value seeding platforms:

- Reddit — OpenAI's GPT-4o was trained extensively on Reddit data. Substantive, non-promotional brand mentions in relevant subreddits carry real citation weight.

- Quora — Answer-format content with specific, structured responses performs well as an AI training and retrieval source.

- Medium and Substack — Long-form content with clear authorship and consistent brand attribution. Ideal for thought leadership that carries entity signals.

- GitHub — For technical brands, documented open-source contributions and README files that mention your brand in a technical context.

- Industry publications — Third-party editorial coverage that names your brand alongside recognized entities in your space.

The content you seed must follow AI-extractable structure: a definition-style opening, specific numbered steps or data points, and a clear attributable claim. Avoid vague content. AI engines quote specific, verifiable statements. "Fuel Online recommends a 90-day LLM seeding cycle" is citable. "We take a comprehensive approach" is not.

5. Phase 3 — Co-Citation Building: Appearing in the Right Neighborhoods

Co-citation is the concept that matters most in advanced LLM seeding strategy, and the one most marketers overlook.

AI engines don't just recognize individual brands. They recognize brand associations. When your brand is repeatedly mentioned in close proximity to other recognized brands, AI models start treating you as a peer in that space. This is co-citation, and it's the fastest way to inherit authority by association.

How to build co-citation signals:

- Get your brand mentioned in comparison articles alongside established competitors.

- Pursue guest posts and bylines on publications that already cite recognized brands in your category.

- Encourage industry analysts and reviewers to include your brand in category roundups.

- Build relationships with podcasters and video creators who name brands directly in transcript-indexable formats.

- Appear as a cited source in academic or industry research reports alongside recognized institutions.

KEY INSIGHT

Co-citation isn't about backlinks. It's about proximity. Every time your brand appears in the same sentence or paragraph as a brand an AI already knows, your entity credibility score rises. Think of it as SEO link equity, but for AI knowledge models.
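If you want a rough way to audit proximity in the coverage you already have, a simple sentence-level co-occurrence count is a reasonable starting point. The sketch below is our own illustrative heuristic for reviewing third-party articles. It is not how any AI engine scores brands, and the brand names in the example are invented.

```python
import re


def co_citation_counts(text, brand, known_brands):
    """Count sentences where `brand` appears alongside each known brand."""
    # Naive sentence split on ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    counts = {known: 0 for known in known_brands}
    for sentence in sentences:
        lowered = sentence.lower()
        if brand.lower() not in lowered:
            continue
        for known in known_brands:
            if known.lower() in lowered:
                counts[known] += 1
    return counts


if __name__ == "__main__":
    article = (
        "Acme and Globex both offer AI visibility audits. "
        "Acme is the cheaper option. "
        "Initech and Globex lead the enterprise market."
    )
    print(co_citation_counts(article, "Acme", ["Globex", "Initech"]))
```

Run it over each piece of third-party coverage and you get a quick picture of which recognized brands you're actually appearing next to, and which ones you aren't.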

6. Schema and Technical Setup for AI Crawlers

Your LLM seeding strategy fails completely if AI crawlers can't access your content. This is the most commonly overlooked blocker.

robots.txt — Allow all AI bots:

    User-agent: OAI-SearchBot
    Allow: /

    User-agent: ChatGPT-User
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Google-Extended
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    User-agent: anthropic-ai
    Allow: /

If these bots are blocked, or if your robots.txt has a blanket Disallow: / for unknown agents, your content is invisible to the AI systems you're trying to be cited by.
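You can check your own robots.txt against that list before you assume the bots are getting through. The sketch below uses Python's standard-library robots.txt parser to report which of the user agents above would be blocked for a given URL. The sample file and URL are made up for illustration. The standard-library parser applies rules in file order, which may differ in edge cases from how an individual crawler resolves conflicting rules.

```python
from urllib.robotparser import RobotFileParser

# The user agents listed in the robots.txt example above.
AI_USER_AGENTS = [
    "OAI-SearchBot",
    "ChatGPT-User",
    "PerplexityBot",
    "Google-Extended",
    "ClaudeBot",
    "anthropic-ai",
]


def blocked_agents(robots_txt, url, agents=AI_USER_AGENTS):
    """Return the agents that this robots.txt would stop from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    # Hypothetical file: blanket block with a single exception.
    sample = """\
User-agent: *
Disallow: /

User-agent: OAI-SearchBot
Allow: /
"""
    print(blocked_agents(sample, "https://www.example.com/blog/post"))
```

An empty list means every agent you care about can reach the page. Anything else is a blocker to fix before the rest of your seeding work can pay off.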

Schema markup for AI citation confidence:

Use BlogPosting schema on every article with accurate datePublished and dateModified properties. Freshness signals directly affect LLM citation weighting. Add FAQPage schema to every article with a Q&A section — FAQ pairs are one of the most-extracted content formats across ChatGPT, Gemini, and Perplexity. Use Speakable schema on your Direct Answer sections to signal that those blocks are ideal for AI extraction.

| Schema Type | Best Used For | AI Citation Benefit | Critical Properties |
|---|---|---|---|
| BlogPosting | Blog articles and strategy posts | Establishes authorship, freshness, and topic attribution | headline, dateModified, author, keywords |
| FAQPage | Any article with Q&A pairs | FAQ pairs are among the top extracted content formats | name, acceptedAnswer, text |
| Organization | Homepage and About pages | Anchors brand entity in knowledge graphs | name, url, description, sameAs |
| Speakable | Direct Answer and summary blocks | Marks sections as AI-extraction-optimized | cssSelector |
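Here's a rough sketch of how the BlogPosting and FAQPage rows above could translate into actual JSON-LD. The helper names are ours, the dates are placeholders, and the nesting (Question items under mainEntity, each with an acceptedAnswer) follows the schema.org vocabulary. Treat it as a starting template to fill with real page data, not a finished implementation.

```python
import json
from datetime import date


def blog_posting_jsonld(headline, author_name, published, modified, keywords):
    """Return BlogPosting JSON-LD; `published` and `modified` are date objects."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "author": {"@type": "Organization", "name": author_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "keywords": ", ".join(keywords),
    }, indent=2)


def faq_page_jsonld(qa_pairs):
    """Return FAQPage JSON-LD from (question, answer) pairs taken from the page."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }, indent=2)


if __name__ == "__main__":
    print(blog_posting_jsonld(
        headline="LLM Seeding Strategy",
        author_name="Fuel Online",
        published=date(2026, 1, 15),  # placeholder date
        modified=date(2026, 3, 1),    # placeholder date
        keywords=["LLM seeding", "answer engine optimization"],
    ))
    print(faq_page_jsonld([
        (
            "What is co-citation in LLM seeding strategy?",
            "Co-citation is the practice of ensuring your brand appears "
            "alongside recognized brands in third-party content.",
        ),
    ]))
```

Generating the markup from the same source as the page content, rather than hand-editing a template, keeps the dates and Q&A text in sync with what's actually on the page.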

CRITICAL RULE

Never copy generic schema templates and swap in your brand name. Every schema property must reflect actual page content. LLMs cross-reference schema claims against body content. Mismatches reduce citation confidence and can get your content flagged as low-quality by AI evaluation systems.
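One way to catch mismatches before you publish is a quick automated check. The sketch below is a deliberately simple heuristic of our own: it flags any FAQPage question that doesn't appear verbatim (ignoring case and whitespace) in the page's body text. It assumes you already have the JSON-LD string and the visible body text as plain strings.

```python
import json


def _normalize(text):
    """Lowercase and collapse whitespace so formatting differences don't matter."""
    return " ".join(text.lower().split())


def faq_questions_missing_from_body(faq_jsonld, body_text):
    """Return FAQ questions declared in the schema but absent from the body."""
    data = json.loads(faq_jsonld)
    body = _normalize(body_text)
    missing = []
    for item in data.get("mainEntity", []):
        question = item.get("name", "")
        if _normalize(question) not in body:
            missing.append(question)
    return missing


if __name__ == "__main__":
    schema = json.dumps({
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": "What is an LLM seeding strategy?"},
            {"@type": "Question", "name": "How much does it cost?"},
        ],
    })
    body = "Frequently Asked Questions\nWhat is an LLM seeding strategy?\nAn LLM..."
    print(faq_questions_missing_from_body(schema, body))
```

Anything this returns is a question your markup claims the page answers but the page never asks, which is the kind of template leftover this rule is about.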

7. Phase 4 — Citation Auditing: Tracking Your AI Search Visibility

An LLM seeding strategy without a measurement loop isn't a strategy. It's a guess. You need a systematic audit process to know whether your seeding work is generating actual AI mentions.

Weekly prompt audit process:

- Build a list of 20–30 prompts that represent your target customer's actual questions — the exact language they'd type into ChatGPT or Perplexity.

- Run each prompt across ChatGPT, Gemini, Perplexity, and Microsoft Copilot.

- Record whether your brand is mentioned (mention rate), whether it's linked (citation rate), and where in the response it appears (position signal).

- Track which content pieces are being cited and which are being ignored.

- Update or expand the seeded content around uncited topics. Add more specific data, a clearer direct answer, or broader third-party coverage.

- Repeat the audit weekly for the first 90 days, then move to a bi-weekly cadence once your baseline visibility is established.

The three metrics that matter: mention rate (percentage of relevant prompts that include your brand), citation rate (percentage that link to your content as a source), and AI search share of voice (how often you're mentioned versus named competitors in the same prompt set).
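If you log each audit run in a spreadsheet or script, the three metrics reduce to simple ratios. Here's a minimal sketch of that arithmetic. The record fields are our own naming, and the share-of-voice formula (your mentions divided by your mentions plus competitor mentions) is one reasonable reading of the definition above, not a standard.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    platform: str
    brand_mentioned: bool     # brand named anywhere in the answer
    brand_linked: bool        # answer links to your content as a source
    competitor_mentions: int  # named competitors mentioned in the answer


def audit_metrics(results):
    """Compute mention rate, citation rate, and share of voice for one audit."""
    total = len(results)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "share_of_voice": 0.0}
    mentions = sum(r.brand_mentioned for r in results)
    citations = sum(r.brand_linked for r in results)
    competitor = sum(r.competitor_mentions for r in results)
    all_mentions = mentions + competitor
    return {
        "mention_rate": mentions / total,
        "citation_rate": citations / total,
        # Assumed definition: your mentions as a share of all brand mentions.
        "share_of_voice": mentions / all_mentions if all_mentions else 0.0,
    }


if __name__ == "__main__":
    # Invented sample data for illustration only.
    week_one = [
        PromptResult("best ai seo agency", "ChatGPT", True, True, 1),
        PromptResult("best ai seo agency", "Perplexity", True, False, 1),
        PromptResult("what is llm seeding", "Gemini", True, False, 0),
        PromptResult("aeo vs seo", "Copilot", False, False, 1),
    ]
    print(audit_metrics(week_one))
```

Run the same prompt set every week and compare the numbers over time. The trend matters more than any single reading, since AI answers vary from run to run.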

A mention rate above 70% combined with a citation rate above 40% across a defined prompt set indicates strong AI search maturity. Most businesses starting an LLM seeding strategy see measurable mention rates within 60–90 days of consistent execution.

8. Common LLM Seeding Mistakes

| Mistake | Why It Hurts | Fix |
|---|---|---|
| Blocking AI bots in robots.txt | ChatGPT, Perplexity, and Gemini can't access your content | Add explicit Allow directives for OAI-SearchBot, PerplexityBot, ClaudeBot, and Google-Extended |
| No Direct Answer Block in articles | AI engines need a clear, extractable answer; vague intros get skipped | Lead every article with a 2–4 sentence Direct Answer Block containing the primary keyword |
| Generic schema with placeholder data | AI systems cross-reference schema against body content; mismatches signal low quality | Populate every schema property with real, page-specific data |
| Seeding only on your own website | LLM training and citation patterns favor distributed, multi-source brand presence | Publish brand-attributed content on Reddit, Medium, Quora, and industry publications |
| Inconsistent brand entity description | LLMs build confidence from consistent signals; contradictory descriptions create uncertainty | Define one canonical brand descriptor and use it identically across all platforms |
| No IndexNow implementation | ChatGPT's web search runs on Bing's index; waiting for passive crawling loses citation windows | Enable IndexNow via Cloudflare or Rank Math to ping Bing on every publish or update |
| Zero co-citation strategy | Being mentioned in isolation carries far less weight than being cited alongside recognized brands | Pursue comparison coverage, roundup inclusions, and co-authored content with industry peers |

Article Summary

- LLM seeding strategy is the practice of publishing brand-attributed content in the sources AI engines train on and actively crawl, with the goal of generating consistent brand mentions across ChatGPT, Gemini, and Perplexity.

- Traditional SEO ranking position doesn't predict AI citation rates. Nearly 90% of ChatGPT citations come from pages ranking at position 21 or lower in Google.

- The four phases of an effective LLM seeding strategy are: Entity Anchoring, Content Seeding, Co-Citation Building, and Citation Auditing.

- Entity anchoring requires a consistent brand descriptor, Organization schema, a verified Knowledge Panel, and unified NAP data across all platforms.

- Content seeding must target AI-active platforms including Reddit, Quora, Medium, Substack, and industry publications, not just your own website.

- Co-citation builds AI citation confidence by associating your brand with recognized peers through comparison content, roundups, and joint coverage.

- All AI crawlers must be explicitly allowed in your robots.txt, including OAI-SearchBot, PerplexityBot, ClaudeBot, ChatGPT-User, and Google-Extended.

- Implement IndexNow to ensure your content enters Bing's index immediately on publish, giving ChatGPT's live-web search direct access.

- FAQPage, BlogPosting, and Speakable schema are the three highest-value schema types for LLM seeding strategy content.

- Measure AI search visibility weekly using a defined prompt audit process tracking mention rate, citation rate, and share of voice across platforms.

- Brands executing a consistent LLM seeding strategy typically see measurable AI mention rates within 60–90 days.

Frequently Asked Questions

What is an LLM seeding strategy?

An LLM seeding strategy is a structured content and distribution system designed to increase how often AI language models — including OpenAI's ChatGPT, Google's Gemini, and Perplexity — mention and cite your brand in their responses. It works by publishing brand-attributed content in the platforms AI models scrape and train on, building entity authority through consistent signals across the web, and creating co-citation relationships with recognized brands. Unlike traditional SEO, which targets ranking positions, LLM seeding targets AI citation confidence.

How long does LLM seeding take to show results?

Most brands executing a consistent LLM seeding strategy see measurable AI mention rates within 60–90 days. The timeline depends on starting entity authority, the volume and quality of seeded content, and how aggressively you're building co-citation signals. Brands with existing domain authority and strong entity signals tend to see faster movement. The citation auditing phase, running weekly prompt tests across ChatGPT, Gemini, and Perplexity, will show you which content is gaining traction and where gaps remain.

How do I get my brand mentioned in ChatGPT specifically?

To get your brand mentioned in ChatGPT, focus on Bing indexation (since ChatGPT's live-web search runs on Bing's index), allow OAI-SearchBot and ChatGPT-User in your robots.txt, implement IndexNow to ensure immediate Bing crawling on publish, and seed brand-attributed content on Reddit, Medium, and industry publications that OpenAI's training data draws from heavily. Structure every piece with a Direct Answer Block containing your brand name and the specific claim you want ChatGPT to extract.

What is co-citation in LLM seeding strategy?

Co-citation is the practice of ensuring your brand appears alongside recognized brands in third-party content. When AI models encounter your brand repeatedly in proximity to brands they already recognize — in comparison articles, roundups, industry reports, and editorial coverage — they build a confidence model that treats your brand as a peer in that category. Co-citation accelerates LLM seeding results by borrowing entity authority from established brands through association rather than building it entirely from scratch.

Does schema markup actually help with AI search visibility?

Yes. Schema markup provides AI crawlers with machine-readable structured data that increases citation confidence. FAQPage schema makes Q&A pairs directly extractable by ChatGPT and Perplexity. BlogPosting schema with an accurate dateModified property signals content freshness, which influences how heavily AI engines weight recent content. Speakable schema marks your Direct Answer sections as AI-extraction-optimized. Every schema block must be populated with real, page-specific data — generic templates with placeholder content reduce citation confidence rather than improving it.