ANSWER ENGINE OPTIMIZATION | Updated March 2026 | 11 min read

- What E-E-A-T for AI search means and how it differs from Google's original framework

- The 3-layer trust hierarchy LLMs use to evaluate whether to cite your content

- How to build author entity signals that ChatGPT, Gemini, and Perplexity recognize

- The exact Person schema properties that raise your LLM citation rate

- Why co-citation is the most underused trust signal in AI search

- A step-by-step E-E-A-T audit checklist for AI search visibility

- The common E-E-A-T mistakes that keep sites out of AI-generated answers

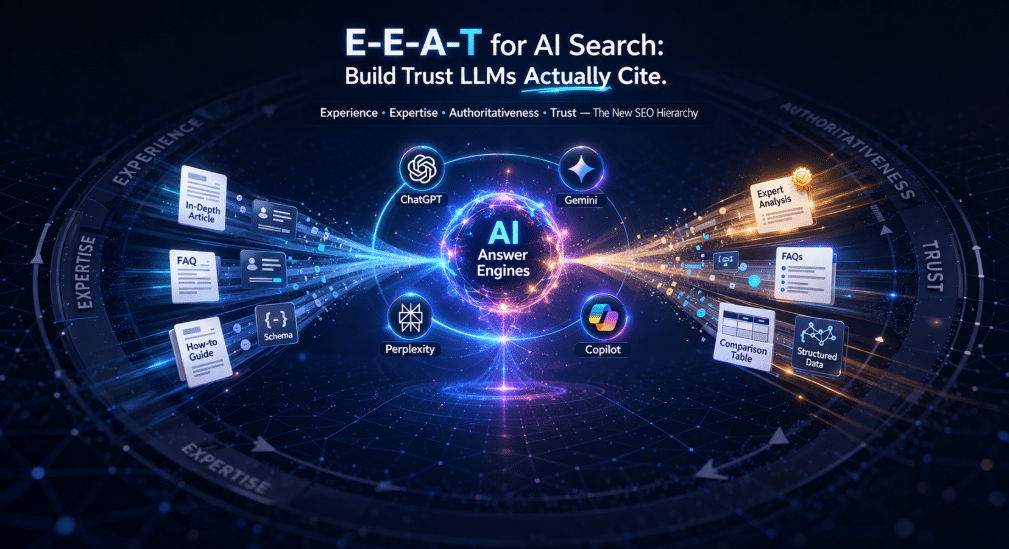

E-E-A-T for AI search is no longer a Google-specific concept. When ChatGPT, Gemini, Perplexity, and Anthropic's Claude generate an answer, they run your content through a trust evaluation process that maps almost directly to Google's Experience, Expertise, Authoritativeness, and Trustworthiness framework. The key difference: LLMs don't just rank you. They decide whether to cite you at all.

Research shows 96% of content appearing in AI Overviews comes from sources with verified E-E-A-T signals. Pages updated within the past two months earn 28% more AI citations than older content. Content with real statistics and named author credentials achieves 30-40% higher visibility in AI-generated responses. This guide breaks down exactly how to build E-E-A-T for AI search so your content gets extracted, not ignored.

1. Why E-E-A-T for AI Search Works Differently Than for Google

Google's original E-E-A-T evaluation happens at crawl time and at ranking time. An algorithm processes your page, looks at signals, and assigns a quality score. LLMs work differently. When OpenAI's ChatGPT or Google's Gemini answers a question, it cross-references training data, live web results, and entity databases simultaneously before deciding what to cite.

This means E-E-A-T for AI search is not a page-level signal. It's a brand-level and author-level signal. If your business entity isn't clearly defined in knowledge graphs, if your authors don't have verifiable credentials, or if your brand information conflicts across platforms, AI systems will route around you, even if your content is excellent.

The practical implication: you can rank on page one of Google and still get zero citations in AI-generated answers. E-E-A-T for AI search requires a separate optimization track.

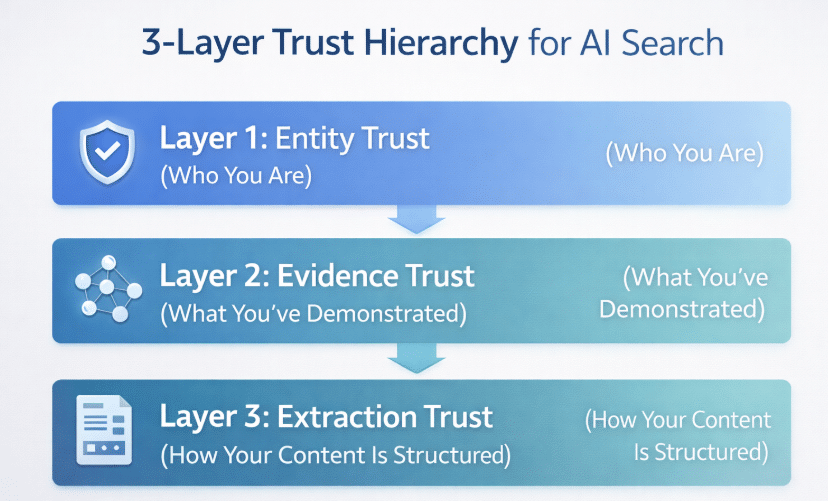

2. The 3-Layer Trust Hierarchy LLMs Use to Evaluate Your Content

Understanding E-E-A-T for AI search starts with understanding how LLMs actually evaluate sources. There are three distinct layers they work through:

Layer 1: Entity Trust (Who You Are)

Before LLMs even evaluate your content, they check whether your brand or author exists as a verified entity. Google's Knowledge Graph, Wikidata, and Wikipedia serve as truth anchors. When an AI system is asked about a topic, it first checks these databases for recognized entity IDs. Information about brands with Knowledge Panels is treated as hard fact; claims about brands without them are treated as unverified.

Entity trust is binary in AI search: you're either a recognized entity or you're not. There's no partial credit.

Layer 2: Evidence Trust (What You've Demonstrated)

Once your entity is recognized, LLMs evaluate the evidence around it. This includes backlinks from industry publications, brand mentions across the web, citations in professional contexts, and co-citation patterns. A brand consistently cited alongside recognized authorities in a topic area inherits credibility in AI training data.

Layer 3: Extraction Trust (How Your Content Is Structured)

The final layer determines whether an LLM can actually extract your content as a citable answer. This is where structured data, direct answer blocks, and FAQ schema come in. Even a highly authoritative source gets passed over if the content isn't structured in a way that allows clean extraction.

3. Building Entity Trust: Structured Data and Knowledge Graph Signals

The most direct way to establish entity trust for E-E-A-T in AI search is through Organization and Person schema markup. These structured data types signal to AI systems who you are, what you do, and how to cross-reference your claims.

Organization Schema for Brand Entity

Your Organization schema should include the sameAs property pointing to your Wikidata entry, LinkedIn page, Crunchbase profile, and any industry directory listings. These external references allow LLMs to triangulate your identity across sources. A schema implementation without sameAs links establishes your organization in isolation, which reduces its entity authority.
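As a minimal sketch, the Python below builds Organization JSON-LD with a sameAs array and wraps it in the `<script type="application/ld+json">` tag that pages normally use to embed structured data. The brand name and every URL are hypothetical placeholders, including the Wikidata item ID; substitute your own verified profiles.

```python
import json

def organization_jsonld(name: str, url: str, same_as: list[str]) -> dict:
    """Build a schema.org Organization node with sameAs cross-references."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # External profiles that let a system cross-check the brand's identity.
        "sameAs": list(same_as),
    }

def to_script_tag(data: dict) -> str:
    """Serialize JSON-LD into the script tag placed in the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

# Hypothetical brand and placeholder profile URLs (not real entities).
org = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=[
        "https://www.wikidata.org/wiki/Q00000000",
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
)
print(to_script_tag(org))
```

Keeping the sameAs list as plain data makes it easy to audit: every entry should resolve to a live profile that describes the same organization.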

Person Schema for Author Authority

Named authorship with verifiable credentials is one of the highest-leverage E-E-A-T signals in AI search. The same article published under an anonymous byline versus a named expert with industry credentials produces measurably different citation rates in AI-generated answers.

Your Person schema should include:

| Property | Purpose | Example Value |
|---|---|---|
| name | Author identity anchor | "Scott Levy" |
| jobTitle | Expertise claim | "Digital Marketing Strategist" |
| alumniOf | Credential verification | University or certification |
| knowsAbout | Topic authority signal | ["AEO", "GEO", "AI SEO"] |
| sameAs | Entity cross-reference | LinkedIn, Twitter/X, Wikidata |
| url | Entity home | Author bio page URL |

Only 31.3% of websites implement any schema markup at all. Fewer still implement author Person schema with knowsAbout and sameAs properties. This creates a clear competitive gap for early adopters of E-E-A-T for AI search.
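The property table above translates directly into Person JSON-LD. This is a hedged sketch: the author "Jane Example" and all of her URLs are hypothetical placeholders, and the `missing_properties` helper is an illustrative check against the table's six properties, not a rule any AI system is known to apply.

```python
import json

# The six properties listed in the Person schema table.
RECOMMENDED_PERSON_PROPERTIES = ("name", "jobTitle", "alumniOf", "knowsAbout", "sameAs", "url")

def person_jsonld(**properties) -> dict:
    """Build a schema.org Person node from keyword arguments."""
    return {"@context": "https://schema.org", "@type": "Person", **properties}

def missing_properties(person: dict) -> list[str]:
    """Return the recommended properties that are absent or empty."""
    return [p for p in RECOMMENDED_PERSON_PROPERTIES if not person.get(p)]

# Hypothetical author; every value below is a placeholder.
author = person_jsonld(
    name="Jane Example",
    jobTitle="Digital Marketing Strategist",
    alumniOf={"@type": "EducationalOrganization", "name": "Example University"},
    knowsAbout=["AEO", "GEO", "AI SEO"],
    sameAs=[
        "https://www.linkedin.com/in/jane-example",
        "https://x.com/jane_example",
    ],
    url="https://www.example.com/authors/jane-example",
)

print(json.dumps(author, indent=2))
print("Missing:", missing_properties(author))
```

Running the same check over every author on a site is a quick way to find bylines that still lack knowsAbout or sameAs.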

4. Building Evidence Trust: Co-Citation and Brand Mentions

Co-citation is the most underused trust signal in E-E-A-T for AI search. When your brand is mentioned alongside recognized authorities in your field, AI training models absorb that association. Over time, your entity becomes conceptually linked to that topical cluster in LLM training data.

How Co-Citation Works in Practice

If Search Engine Journal, Moz, and Search Engine Land each cite your brand in their coverage of AEO strategy, LLMs trained on that content can absorb the association. Your brand becomes part of the "trusted voices in AEO" cluster in the model's weights. When a user later asks ChatGPT or Gemini about AEO, your brand is more likely to surface.

This is why the strategy of getting mentioned in established industry publications goes beyond traditional link building. You're not just chasing PageRank. You're seeding your entity into AI training data alongside brands the model already trusts.
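Co-citation can be roughly measured by counting how often two entities are named in the same article. The sketch below assumes you have already collected, by hand or with a monitoring tool, the set of brands mentioned in each article; the brand names are illustrative, and the count says nothing about how any particular LLM weights these associations.

```python
from collections import Counter
from itertools import combinations

def co_citation_counts(articles: list[set[str]]) -> Counter:
    """Count how many articles mention each unordered pair of entities together."""
    counts: Counter = Counter()
    for entities in articles:
        # Sorting makes ("A", "B") and ("B", "A") the same key.
        for pair in combinations(sorted(entities), 2):
            counts[pair] += 1
    return counts

def co_cited_with(counts: Counter, entity: str) -> Counter:
    """Return the entities co-cited with `entity` and how often."""
    partners: Counter = Counter()
    for (a, b), n in counts.items():
        if a == entity:
            partners[b] += n
        elif b == entity:
            partners[a] += n
    return partners

# Hypothetical sample: entities mentioned in three industry articles.
sample = [
    {"YourBrand", "Moz", "Search Engine Journal"},
    {"YourBrand", "Moz"},
    {"Moz", "Search Engine Land"},
]
counts = co_citation_counts(sample)
print(co_cited_with(counts, "YourBrand").most_common())
```

Tracked month over month, a growing list of partners for your brand is one rough proxy for how well a co-citation campaign is working.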

Practical Co-Citation Tactics:

- Write guest content for established industry publications in your niche

- Get quoted in journalist roundups and expert Q&A pieces

- Participate in podcast interviews that get transcribed and indexed

- Publish original research or data that industry publications cite

- Issue structured press releases to newswires with schema markup


5. Building Extraction Trust: How to Structure Content for LLM Extraction

The third layer of E-E-A-T for AI search is about formatting your content so LLMs can reliably extract it as an answer. Even with strong entity and evidence trust, poorly structured content gets passed over.

The Direct Answer Block

Every page you want AI systems to cite needs a direct answer block in the first 200 words. This is a clearly delineated 2-4 sentence answer to the page's primary question. Structure it as a visually distinct box or paragraph that could stand alone as a complete answer. This is the #1 element that LLMs extract.
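The two rules in that paragraph (the answer sits in the first 200 words and runs 2-4 sentences) are easy to check automatically. This is a minimal sketch that assumes you already have the page's visible text and the answer block's text as plain strings; the sentence splitter is deliberately naive and will miscount abbreviations such as "e.g.".

```python
import re

def check_direct_answer(page_text: str, answer: str, window_words: int = 200,
                        min_sentences: int = 2, max_sentences: int = 4) -> dict:
    """Check that `answer` lies entirely within the first `window_words` words
    of `page_text` and has an acceptable sentence count."""
    # Normalize whitespace so line breaks don't defeat the substring match.
    window = " ".join(page_text.split()[:window_words])
    normalized = " ".join(answer.split())
    # Naive sentence split: ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", normalized) if s]
    in_window = normalized in window
    length_ok = min_sentences <= len(sentences) <= max_sentences
    return {
        "in_first_words": in_window,
        "sentence_count": len(sentences),
        "ok": in_window and length_ok,
    }

answer = ("E-E-A-T for AI search is the set of trust signals an LLM checks before citing a source. "
          "It is a citation decision, not a ranking decision.")
page = answer + " " + "Supporting detail. " * 150
print(check_direct_answer(page, answer))
```

An answer block that starts inside the window but runs past word 200 fails the check, which matches the intent that the whole answer be available early.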

FAQ Sections with FAQPage Schema

FAQPage schema maps your Q&A section directly to structured data that LLMs parse. Each question should match an actual search query your audience types. Each answer should be 3-6 sentences, factual, and complete without requiring context from the rest of the article.
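One way to keep FAQPage markup identical to the visible Q&A is to generate both from a single list of question/answer pairs. This is a sketch under that assumption; the sample pair is a placeholder, and the HTML structure (h3 plus p) is an arbitrary choice, not a requirement of FAQPage.

```python
import html
import json

def faq_page_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build FAQPage JSON-LD whose questions and answers are exactly the given strings."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

def faq_html(pairs: list[tuple[str, str]]) -> str:
    """Render the visible FAQ from the same pairs, so the markup cannot drift from the text."""
    return "\n".join(
        f"<h3>{html.escape(q)}</h3>\n<p>{html.escape(a)}</p>" for q, a in pairs
    )

# Placeholder Q&A pair; in practice, use the page's real FAQ text.
faqs = [
    ("What is a direct answer block?",
     "A direct answer block is a short answer to the page's primary question. "
     "It sits in the first 200 words of the page. "
     "It should make sense without the rest of the article."),
]
print(faq_html(faqs))
print(json.dumps(faq_page_jsonld(faqs), indent=2))
```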

Speakable Schema for Voice and AI Extraction

Speakable schema tags specific sections of your page as ideal for AI extraction. By adding CSS classes (.direct-answer-block, .key-insight) to those sections and referencing them as CSS selectors in the speakable property of your WebPage schema, you give LLMs a roadmap to your most citable content.
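Here is a minimal sketch of that WebPage markup, using schema.org's SpeakableSpecification with the two class selectors named above. The page name and URL are placeholders, and the selectors only help if elements with those classes actually exist in the page's HTML.

```python
import json

def webpage_with_speakable(name: str, url: str, css_selectors: list[str]) -> dict:
    """Build WebPage JSON-LD whose speakable property points at CSS-selected sections."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": list(css_selectors),
        },
    }

page = webpage_with_speakable(
    name="E-E-A-T for AI Search",
    url="https://www.example.com/eeat-ai-search",  # placeholder URL
    css_selectors=[".direct-answer-block", ".key-insight"],
)
print(json.dumps(page, indent=2))
```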

6. The E-E-A-T Schema Stack for AI Search

| Schema Type | What It Signals | AI Citation Benefit | Critical Properties |
|---|---|---|---|
| Organization | Brand entity exists | Establishes entity anchor | sameAs, url, name |
| Person | Author expertise | Named authority attribution | knowsAbout, sameAs, jobTitle |
| BlogPosting / Article | Content type and freshness | Recency signal for citations | dateModified, author, keywords |
| FAQPage | Structured Q&A pairs | Direct extraction target | All FAQ Q&A populated exactly |
| Speakable | Preferred extraction zones | Marks best extraction targets | CSS selectors pointing to content |
| BreadcrumbList | Site structure | Navigation and context | All levels populated |
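The Organization, Person, and BlogPosting rows of this stack can live in one JSON-LD @graph, with the BlogPosting pointing at the other two nodes by @id instead of repeating them. This is a minimal sketch; the site, names, dates, keywords, and the "#organization"/"#author" @id fragments are hypothetical conventions, not requirements.

```python
import json
from datetime import date

def schema_stack(site: str, org_name: str, author_name: str, headline: str,
                 keywords: list[str], published: date, modified: date) -> dict:
    """Link Organization, Person, and BlogPosting nodes in one JSON-LD @graph via @id."""
    org_id = f"{site}/#organization"
    author_id = f"{site}/#author"  # hypothetical; use one @id per author on multi-author sites
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id, "name": org_name, "url": site},
            {"@type": "Person", "@id": author_id, "name": author_name},
            {
                "@type": "BlogPosting",
                "headline": headline,
                "keywords": keywords,
                "author": {"@id": author_id},  # reference to the Person node, not a copy
                "publisher": {"@id": org_id},
                "datePublished": published.isoformat(),
                # Change only when the article's content actually changes.
                "dateModified": modified.isoformat(),
            },
        ],
    }

stack = schema_stack(
    site="https://www.example.com",
    org_name="Example Co",
    author_name="Jane Example",
    headline="E-E-A-T for AI Search",
    keywords=["E-E-A-T", "AI search", "LLM citations"],
    published=date(2026, 1, 10),
    modified=date(2026, 3, 2),
)
print(json.dumps(stack, indent=2))
```

Referencing nodes by @id keeps a single definition of the brand and each author, which avoids the conflicting brand information across pages that the trust layers penalize.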

7. Robots.txt: The E-E-A-T Signal You're Probably Blocking

If your robots.txt is blocking AI crawlers, none of your E-E-A-T work matters. The bot simply can't reach the content. Check your robots.txt and add explicit Allow directives for these AI user agents:

```
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Google-Extended
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: anthropic-ai
Allow: /
```

This is especially critical if you're using older security plugins or CDN configurations that default to blocking non-standard bots.
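You can test a robots.txt file against these user agents with Python's standard-library `urllib.robotparser`. The sketch below parses a hypothetical file that blocks everything by default and only lets OAI-SearchBot back in; the URL is a placeholder. Note that Python's parser treats a blank line as the end of a record, so each User-agent line should sit directly above its Allow line.

```python
from urllib.robotparser import RobotFileParser

# The AI user agents listed above.
AI_USER_AGENTS = ["OAI-SearchBot", "ChatGPT-User", "PerplexityBot",
                  "Google-Extended", "ClaudeBot", "anthropic-ai"]

def audit_robots(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each AI user agent may fetch `url` under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}

# Hypothetical file: everything blocked by default, only OAI-SearchBot allowed back in.
robots = """\
User-agent: *
Disallow: /

User-agent: OAI-SearchBot
Allow: /
"""
for agent, allowed in audit_robots(robots, "https://www.example.com/blog/post").items():
    print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

To audit a live site, pass the text of its real robots.txt instead of the sample string.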

8. IndexNow: Getting Your Updated Content to LLMs Fast

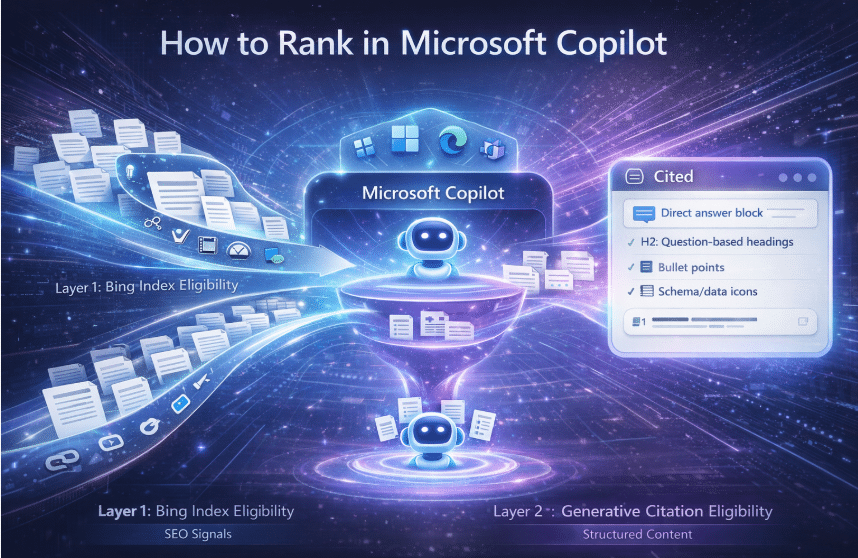

Freshness is a measurable E-E-A-T signal for AI search. Pages updated within two months earn 28% more AI citations than older content. The challenge: ChatGPT's live-web search is powered by Bing's index, and Bing's passive crawl can take weeks.

IndexNow solves this. When you publish or update a page, IndexNow sends an immediate ping to Bing asking it to recrawl that URL. Your fresh E-E-A-T signals can then reach ChatGPT search results within hours instead of weeks. IndexNow is available through Cloudflare's integration or directly through Yoast SEO (version 19.0+) and Rank Math.
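For sites without a plugin, an IndexNow submission is a single JSON POST. This is a minimal standard-library sketch following the protocol's documented fields (host, key, keyLocation, urlList) and the shared api.indexnow.org endpoint. The host, key, and URL are placeholders, and the protocol expects the key to be published as a text file on your own domain so the receiving engine can verify ownership. The request is only built here, not sent.

```python
import json
import urllib.request
from typing import Optional

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"

def build_indexnow_request(host: str, key: str, urls: list[str],
                           key_location: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) an IndexNow POST for a batch of updated URLs."""
    payload = {"host": host, "key": key, "urlList": list(urls)}
    if key_location:
        # Only needed if the key file is not at https://<host>/<key>.txt
        payload["keyLocation"] = key_location
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )

req = build_indexnow_request(
    host="www.example.com",
    key="0123456789abcdef",  # placeholder key
    urls=["https://www.example.com/eeat-ai-search"],
)
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
# Once the key file is live, sending is one call: urllib.request.urlopen(req)
```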

9. Tracking Your E-E-A-T Performance in AI Search

You cannot optimize what you cannot measure. Here's the weekly audit process for E-E-A-T for AI search:

- Run weekly prompt audits: Type your target queries directly into ChatGPT, Gemini, Perplexity, and Microsoft Copilot. Note which sources get cited. If competitors appear and you don't, their E-E-A-T signals are likely stronger for that query.

- Track brand mention velocity: Use monitoring tools to track unlinked brand mentions across the web. Increasing mention frequency signals growing entity authority.

- Audit schema for errors: Use Google's Rich Results Test and the Schema.org validator to confirm your structured data is rendering correctly. Schema errors silently kill extraction trust.

- Check author entity health: Search for your key authors by name across Google, ChatGPT, and Gemini. If LLMs describe them inaccurately or don't recognize them, your Person schema and entity building need attention.

- Refresh content quarterly: Update your top E-E-A-T pages at least every 90 days. Change dateModified in schema only when the content actually changes; false freshness signals erode trust.
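The weekly prompt audit produces data worth tracking over time. One hedged way to summarize it, assuming you log each prompt's engine, query, and cited domains by hand (the rows below are invented for illustration), is a per-engine citation share for your domain.

```python
from collections import defaultdict

def citation_share(audit_rows: list[dict], domain: str) -> dict[str, float]:
    """Fraction of audited prompts, per engine, whose answer cited `domain`."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for row in audit_rows:
        totals[row["engine"]] += 1
        if domain in row["cited_domains"]:
            hits[row["engine"]] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}

# Hypothetical week of manually recorded prompt-audit results.
week = [
    {"engine": "ChatGPT", "query": "what is e-e-a-t for ai search", "cited_domains": {"example.com", "moz.com"}},
    {"engine": "ChatGPT", "query": "person schema for authors", "cited_domains": {"moz.com"}},
    {"engine": "Perplexity", "query": "what is e-e-a-t for ai search", "cited_domains": {"example.com"}},
    {"engine": "Gemini", "query": "person schema for authors", "cited_domains": set()},
]
for engine, share in citation_share(week, "example.com").items():
    print(f"{engine}: {share:.0%}")
```

Running the same function on a competitor's domain over the same rows shows where their signals are winning citations that yours are not.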

Keyword Mapping Table

| Keyword | Intent | Content Section |
|---|---|---|
| E-E-A-T for AI search | Core concept definition | Section 1, Direct Answer Block |
| trust signals for LLMs | How AI evaluates sources | Section 2 (3-layer hierarchy) |
| author entity optimization | Personal authority building | Section 3 (Person schema) |
| E-E-A-T signals 2026 | Current best practices | Sections 3-5 |
| AI search authority signals | Evidence trust tactics | Section 4 (co-citation) |
| LLM citation optimization | Structural formatting | Section 5 (extraction trust) |
| entity SEO for AI | Knowledge graph strategy | Section 3 |
| Person schema markup | Technical implementation | Section 3 (schema table) |

Common E-E-A-T Mistakes in AI Search

| Mistake | Why It Hurts | Fix |
|---|---|---|
| Anonymous authorship | LLMs can't verify expertise without a named entity | Add author bylines with Person schema and credentials |
| Generic Organization schema | No sameAs links = no cross-reference = low entity confidence | Add Wikidata, LinkedIn, Crunchbase in sameAs array |
| No direct answer block | LLMs skip pages they can't extract a clean answer from | Add a 2-4 sentence direct answer in the first 200 words |
| Blocking AI crawlers in robots.txt | Content is invisible to AI indexing entirely | Add explicit Allow for OAI-SearchBot, PerplexityBot, ClaudeBot |
| Stale content without dateModified | LLMs deprioritize old sources for current queries | Update content at least every 90 days and change dateModified when the content changes |
| No co-citation strategy | Entity never associated with topical authority clusters | Target mentions in industry publications alongside recognized experts |
| FAQPage schema not matching content | LLMs detect mismatches and reduce extraction confidence | Populate schema from the exact text of your FAQ section |

Article Summary

- E-E-A-T for AI search operates at the brand and author level. LLMs evaluate whether you're a trusted entity before they evaluate your content.

- All three layers of the trust hierarchy (Entity Trust, Evidence Trust, and Extraction Trust) must be strong for consistent AI citations.

- Organization schema with sameAs links to Wikidata, LinkedIn, and Crunchbase establishes your brand entity in the knowledge graphs that LLMs use as truth anchors.

- Person schema with knowsAbout, jobTitle, and sameAs properties is the highest-leverage author authority signal. Named authorship outperforms anonymous content in AI-generated answers.

- Co-citation (being mentioned alongside recognized authorities) seeds your brand entity into AI training data and topical authority clusters.

- Extraction trust requires a direct answer block, FAQPage schema, and optionally Speakable schema with CSS selectors.

- AI crawlers must not be blocked in robots.txt. Add explicit Allow rules for OAI-SearchBot, ChatGPT-User, PerplexityBot, Google-Extended, ClaudeBot, and anthropic-ai.

- IndexNow pings Bing as soon as a page changes, so your updated E-E-A-T signals can reach ChatGPT search within hours rather than weeks.

- Content with statistics and named author credentials earns 30-40% higher visibility in AI responses. Pages updated within two months earn 28% more citations.

- Weekly prompt audits across ChatGPT, Gemini, Perplexity, and Copilot are the only reliable way to track E-E-A-T performance in AI search.

Frequently Asked Questions

What is E-E-A-T for AI search and how is it different from Google E-E-A-T?

E-E-A-T for AI search refers to the trust and authority signals that LLMs like ChatGPT, Gemini, and Perplexity evaluate when deciding whether to cite your content in generated answers. While Google's original E-E-A-T framework primarily influences page rankings in traditional search results, E-E-A-T for AI search is a citation decision, not a ranking decision. AI systems either include you as a source or they don't. The evaluation includes entity verification through knowledge graphs, author credential cross-referencing, and structured data checks that traditional Google rankings don't require in the same way.

Which E-E-A-T signals matter most for getting cited by ChatGPT?

For ChatGPT citations, entity trust is the highest-priority signal. ChatGPT's live-web browsing pulls from Bing's index, so your Organization schema, Bing-indexed content, and IndexNow implementation all directly affect citation availability. Named authorship with verifiable Person schema, a direct answer block in the first 200 words, and explicit Allow directives for OAI-SearchBot and ChatGPT-User in your robots.txt are the core requirements. Content without a clear direct answer structure is rarely extracted even from well-indexed pages.

How long does it take to build E-E-A-T for AI search?

Entity trust signals like Organization schema and Knowledge Panel eligibility can take 3-6 months to establish and propagate through LLM training data. Evidence trust through co-citation campaigns produces measurable results in 60-90 days for current AI Overviews and live-web searches. Extraction trust through structured data and content formatting can take effect within days of implementation. The full E-E-A-T for AI search picture typically builds over 6 months of consistent investment across all three layers.

Do I need a Wikipedia page for E-E-A-T in AI search?

You don't strictly need a Wikipedia page, but Wikipedia significantly accelerates entity trust for AI search. LLMs use Wikipedia as a primary truth anchor, and brands with Wikipedia entries are more likely to have Knowledge Panels, which AI systems treat as hard facts. If your brand doesn't qualify for a Wikipedia entry, Wikidata is a direct alternative. A Wikidata entry with proper sameAs schema references gives LLMs most of the entity verification benefit, under notability requirements that are less strict than Wikipedia's.

What schema markup is most important for E-E-A-T in AI search?

The highest-priority schema types for E-E-A-T in AI search are: BlogPosting or Article schema (with dateModified, author, and keywords), FAQPage schema (populated exactly from your FAQ content), and Person schema for authors (with knowsAbout, sameAs, and jobTitle). Organization schema with sameAs links to external authority sources provides the brand entity anchor. Speakable schema with CSS selectors targeting your direct answer block and key insights rounds out the stack by marking your most citable content for AI extraction.