ANSWER ENGINE OPTIMIZATION | Updated April 2026 | 12 min read

What You'll Learn in This Guide

- What LLMO (large language model optimization) is and why it matters more than traditional SEO for AI visibility in 2026

- The LLMO Visibility Stack: a 5-layer framework for getting your content cited by AI engines

- How LLMO differs from GEO and AEO, and where each discipline fits in your strategy

- The 7 core LLMO ranking signals that determine whether ChatGPT, Gemini, and Perplexity cite your brand or your competitor

- Robots.txt directives and IndexNow setup for AI crawler access

- A weekly LLMO audit process you can run in under 30 minutes

- Co-citation and entity density tactics that make LLMs treat your brand as authoritative

- Common LLMO mistakes that silently kill your AI search visibility

LLMO, or large language model optimization, is the practice of structuring your content so AI systems like OpenAI's ChatGPT, Google's Gemini, and Perplexity can find, understand, and cite your brand in their generated answers. If your site gets traffic from Google but zero citations in AI-generated responses, you have an LLMO problem.

This guide breaks down exactly how large language model optimization works in 2026, covering the ranking signals AI engines actually use, the technical requirements you cannot skip, and a repeatable audit process that keeps your brand visible as these systems evolve. The strategies here apply across every major LLM platform: ChatGPT, Gemini, Perplexity, Anthropic's Claude, Microsoft Copilot, and Grok.

Direct Answer: What Is LLMO (Large Language Model Optimization)?

LLMO (large language model optimization) is the process of making your content discoverable, understandable, and citable by AI systems like ChatGPT, Gemini, and Perplexity. Unlike traditional SEO, which targets search engine rankings, LLMO focuses on earning AI citations through clear entity signals, structured data, factual density, and cross-source brand consistency. The payoff is significant: AI-referred visitors convert at 4.4x the rate of traditional organic search visitors.

1. What Is LLMO and Why Does It Matter in 2026?

Large language model optimization is the foundational discipline beneath both GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization). Where GEO focuses on appearing in AI-generated search results and AEO targets direct answer features, LLMO addresses the root question: can an LLM even find, parse, and trust your content in the first place?

The numbers make the urgency clear. Gartner predicts that traditional search engine volume will drop 25% by 2026 as users shift to AI chatbots and other virtual agents. AI-driven visitors convert at 4.4x the rate of traditional organic visitors, according to Ahrefs internal data. And in certain B2B segments, LLM-referred traffic has been reported to convert at rates 6x to 27x higher than standard search.

If your content is invisible to large language models, you are losing the highest-converting traffic channel available right now. LLMO fixes that.

2. LLMO vs GEO vs AEO: What Is the Difference?

Marketers throw around LLMO, GEO, and AEO as if they are interchangeable. They are not. Each operates at a different layer of the AI search stack.

| Discipline | Primary Focus | Target Platforms | Key Metric |
|---|---|---|---|
| LLMO (Large Language Model Optimization) | Making content citable by any LLM | All LLMs: ChatGPT, Gemini, Claude, Perplexity, Copilot, Grok | Citation rate across AI platforms |
| GEO (Generative Engine Optimization) | Appearing in AI-generated search results | Google AI Overviews, Bing Copilot, Perplexity | AI Overview inclusion rate |
| AEO (Answer Engine Optimization) | Winning direct answer positions | Featured snippets, voice assistants, AI answer boxes | Direct answer win rate |

Think of it this way: LLMO is the foundation. It makes your content machine-readable and trustworthy to any language model. GEO applies LLMO principles specifically to generative search interfaces. AEO applies them to direct answer extraction. You need all three, but LLMO comes first because without it, GEO and AEO cannot function.

KEY INSIGHT: LLMO is not a replacement for SEO. It is a parallel discipline. Traditional SEO gets your pages indexed and ranked. LLMO gets your content cited when AI systems generate answers. The brands winning in 2026 run both simultaneously.

3. The LLMO Visibility Stack: A 5-Layer Framework

The LLMO Visibility Stack is a prioritized framework for large language model optimization. Work from the bottom up: each layer depends on the ones beneath it, so skipping a layer undermines everything built on top of it.

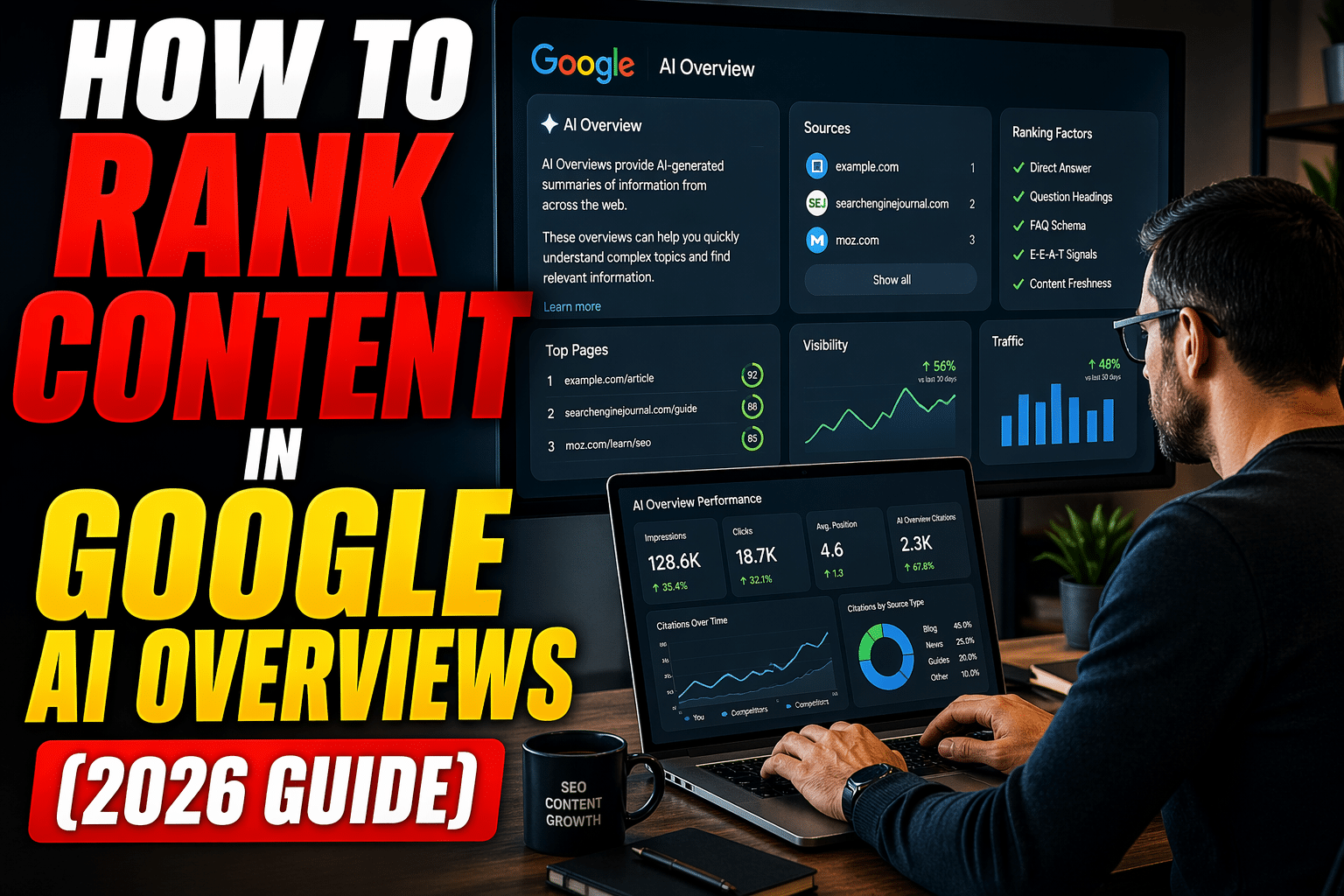

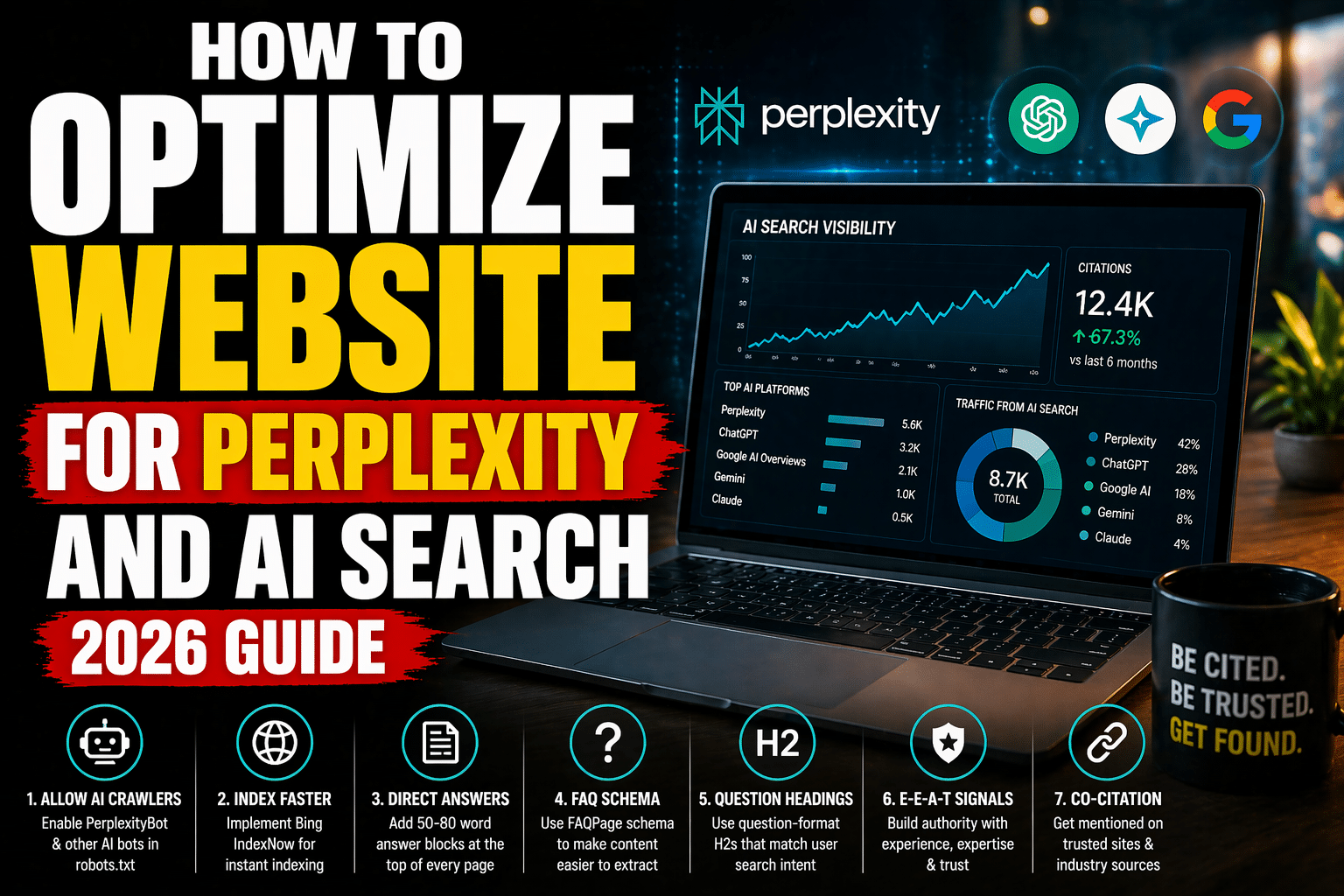

Layer 1: Technical Access

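LLMs cannot cite content they cannot crawl. Your robots.txt must not block AI bots, and explicit Allow rules for each AI user agent make that intent unambiguous. Your sitemap must be current. IndexNow must be active so new and updated content is submitted to Bing's index (and by extension, ChatGPT's search) as soon as it changes, instead of waiting weeks for the next routine crawl.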

Layer 2: Content Structure

LLMs extract answers from content that is structured for machine parsing. That means clear H2/H3 hierarchies, direct answer blocks within the first 300 words, FAQ sections with complete answers, and comparison tables for any multi-option topic. Vague, wall-of-text content gets ignored.
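A quick way to audit the heading part of this layer is to scan each page for skipped levels, such as an H2 followed directly by an H4. The sketch below uses only Python's standard-library html.parser; the function name skipped_heading_levels and the rule that headings should never jump down more than one level are our own illustrative assumptions, not requirements published by any AI engine.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records heading levels (h1-h6) in the order they appear."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # html.parser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous, current) level pairs where a heading skips a level going down."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    return [
        (prev, cur)
        for prev, cur in zip(levels, levels[1:])
        if cur > prev + 1  # e.g. H2 followed directly by H4
    ]
```

For example, skipped_heading_levels("<h1>A</h1><h2>B</h2><h4>C</h4>") returns [(2, 4)], flagging the jump from H2 to H4.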

Layer 3: Entity Authority

LLMs verify claims by cross-referencing entities. Your content must name specific tools, platforms, protocols, and people rather than using vague references. When you write about E-E-A-T signals, name Google's framework explicitly: Experience, Expertise, Authoritativeness, and Trustworthiness. When you reference a protocol, say "Microsoft Bing's IndexNow" the first time.

Layer 4: Co-Citation Density

Large language models determine brand authority partly through co-citation, which is how frequently your brand appears alongside trusted sources across the web. If Wikipedia, Reddit threads, industry publications, and YouTube descriptions all mention your brand in the same context as a topic, LLMs assign higher trust.

Layer 5: Freshness Signals

LLMs strongly favor recent content. Your dateModified schema must be accurate. Your content refresh cycle should be quarterly at minimum. Stale content with outdated statistics gets deprioritized in AI-generated answers, even if it ranks well in traditional search.
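The freshness rule comes down to simple date arithmetic. This minimal sketch assumes a 90-day window (one quarterly refresh cycle) and that you pass in the page's dateModified value as an ISO 8601 string; is_fresh is a hypothetical helper name, not part of any schema tool.

```python
from datetime import date, timedelta


def is_fresh(date_modified: str, today: date, max_age_days: int = 90) -> bool:
    """True if an ISO 8601 dateModified value is no older than max_age_days."""
    # Keep only the YYYY-MM-DD part so full timestamps such as
    # "2026-03-02T09:00:00+00:00" are accepted as well.
    modified = date.fromisoformat(date_modified[:10])
    return today - modified <= timedelta(days=max_age_days)
```

In a real audit you would pass date.today() as today; taking it as a parameter keeps the check easy to test.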

4. The 7 Core LLMO Ranking Signals

Based on extensive prompt testing across ChatGPT, Gemini, Perplexity, and Claude, these seven signals most directly influence whether an LLM cites your content:

- Crawl Accessibility: AI bots can reach and parse your pages without restrictions

- Direct Answer Presence: A clear, extractable answer within the first 300 words

- Factual Density: Specific numbers, named entities, and verifiable claims per paragraph

- Schema Markup Completeness: BlogPosting, FAQPage, and Speakable schema properly implemented

- Source Consensus: Your brand is mentioned across multiple independent sources for the same topic

- Content Freshness: dateModified within the last 90 days, with updated statistics and references

- Reading Mode Compatibility: Clean HTML structure that renders correctly in reader views and AI parsing tools

CRITICAL RULE: If your robots.txt blocks OAI-SearchBot, ChatGPT-User, PerplexityBot, ClaudeBot, or Google-Extended, no amount of content optimization will earn you AI citations. Fix technical access first.
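You can check technical access before touching content by running your robots.txt rules through Python's standard-library urllib.robotparser. This is a sketch under assumptions: blocked_ai_agents is a hypothetical helper, and Python's parser matches user-agent names by case-insensitive substring, so its verdicts may differ slightly from each AI vendor's own robots.txt handling.

```python
from urllib.robotparser import RobotFileParser

# The AI user agents named in the critical rule above.
AI_USER_AGENTS = ["OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_agents(robots_txt: str, url: str) -> list[str]:
    """Return the AI user agents that these robots.txt rules would block from url."""
    parser = RobotFileParser()
    # parse() takes the file's lines directly, so no network fetch is needed.
    parser.parse(robots_txt.splitlines())
    # Python matches group names to agents by case-insensitive substring.
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]
```

Paste your live robots.txt text in as robots_txt; an empty result means none of the listed agents is blocked for that URL.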

5. Technical Setup for LLMO: Robots.txt and IndexNow

Every large language model optimization strategy starts with technical SEO for AI crawlers. Here is the minimum required setup.

Robots.txt AI Bot Directives

Add these directives to your robots.txt file:

```
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Google-Extended
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: anthropic-ai
Allow: /
```

IndexNow Implementation

Because ChatGPT's live-web search draws on Bing's index, you cannot afford to wait for passive crawling. Implement the IndexNow protocol to notify Bing the moment you publish or update a page, so the new version can be recrawled and become retrievable for ChatGPT search queries far sooner. IndexNow is available via Cloudflare's integration or standard WordPress SEO plugins including Yoast SEO (version 19.0+) and Rank Math.
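If you are not relying on a plugin or Cloudflare, an IndexNow submission is a single JSON POST. The sketch below builds that request with Python's standard library but does not send it. The endpoint and field names (host, key, keyLocation, urlList) follow the public IndexNow protocol as we understand it, so confirm them against the official IndexNow documentation; build_indexnow_request is a hypothetical helper, and hosting the key file at your site root is an assumption.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) an IndexNow POST announcing new or updated URLs."""
    payload = {
        "host": host,
        "key": key,
        # The key must also be served as a text file on your site so the
        # search engine can verify ownership; here we assume the site root.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
```

To actually submit, pass the request to urllib.request.urlopen(). IndexNow-participating engines share submissions with one another, so a single ping is enough.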

6. Schema Markup Strategy for LLMO

Schema markup tells LLMs exactly what your content is, who wrote it, when it was last updated, and which sections are most important. For large language model optimization, three schema types are non-negotiable:

| Schema Type | Best Used For | AI Citation Benefit | Critical Properties |
|---|---|---|---|
| BlogPosting / Article | Every content page | Establishes content type, author, and freshness | headline, dateModified, author, description |
| FAQPage | Pages with Q&A sections | Direct extraction target for AI answers | mainEntity (a list of Question items), each with name (the question) and acceptedAnswer (an Answer with text) |
| Speakable (the speakable property, typed SpeakableSpecification) | Summary and direct answer sections | Marks content as ready for AI voice extraction | cssSelector targeting .direct-answer-block |

CRITICAL RULE: Never use generic schema templates. Every JSON-LD block must be populated with the actual content on that specific page. Search engines expect structured data to describe what is visibly on the page, and schema claims that contradict the page text undermine the trust signals the markup is meant to provide.
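The page-specific rule is easiest to follow when JSON-LD is generated from the same data that renders the page rather than copied from a template. This minimal sketch assumes a hypothetical page dictionary (headline, description, date_modified, author, faqs) and emits BlogPosting markup with a speakable SpeakableSpecification plus a FAQPage block, using schema.org property names; build_json_ld is a hypothetical helper, so adapt the input field names to your own CMS.

```python
import json


def build_json_ld(page: dict) -> str:
    """Build BlogPosting + FAQPage JSON-LD from one page's own data."""
    blog_posting = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": page["headline"],
        "description": page["description"],
        "dateModified": page["date_modified"],
        "author": {"@type": "Person", "name": page["author"]},
        # speakable points extraction tools at the on-page answer block.
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": [".direct-answer-block"],
        },
    }
    faq_page = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        # One Question per (question, answer) pair actually shown on the page.
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in page["faqs"]
        ],
    }
    # A JSON array lets both objects share one script tag.
    return json.dumps([blog_posting, faq_page], indent=2)
```

Place the returned string inside a script tag of type application/ld+json on that page only.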

7. Co-Citation Strategy for LLMO

Co-citation is the strongest off-page signal in large language model optimization. When multiple independent sources mention your brand in the same topical context, LLMs treat that brand as authoritative for that topic.

Here is how to build co-citation density:

- Earn editorial mentions in industry publications that cover your topic area

- Build a presence on Reddit in relevant subreddits where your expertise applies

- Create YouTube content that names your brand alongside the topics you want to own

- Contribute to Wikipedia where appropriate (verifiable sourcing, not self-promotion)

- Partner with complementary brands so their content references yours and vice versa

- Maintain consistent NAP (name, address, phone number) and entity information across all platforms and directories

The goal is simple: when an LLM processes the question "Who is an authority on [your topic]?", it should find your brand mentioned across five or more independent sources.
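To check whether you clear that five-source bar, count distinct independent domains in your brand-mention data. This sketch assumes you already export mention URLs from a brand-monitoring tool and treats each hostname (minus a leading www.) as one independent source; independent_sources and yourbrand.com are hypothetical placeholders, and domain counting is only a rough proxy for editorial independence.

```python
from urllib.parse import urlparse


def independent_sources(mention_urls: list[str], own_domains: set[str]) -> set[str]:
    """Distinct third-party hostnames among URLs that mention your brand."""
    sources = set()
    for url in mention_urls:
        host = (urlparse(url).hostname or "").lower().removeprefix("www.")
        if not host:
            continue
        # Pages on your own domains (or their subdomains) are not independent.
        if any(host == d or host.endswith("." + d) for d in own_domains):
            continue
        sources.add(host)
    return sources
```

Checking len(independent_sources(urls, {"yourbrand.com"})) >= 5 then gives a simple pass/fail for the five-source goal.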

8. How to Write Content That LLMs Actually Cite

Content that earns LLMO citations follows a specific pattern. Here is the formula:

Open with the answer. Place a direct, complete answer to the page's primary query within the first 300 words. This is what LLMs extract first.

Use factual density over filler. Every paragraph should contain at least one specific claim: a number, a named entity, a dated reference, or a process step. LLMs skip paragraphs that contain only opinions or generalizations.

Structure for extraction. Use H2 headings that match actual search queries. Use comparison tables for multi-option topics. Use numbered lists for processes. Each structural element is a potential extraction point for AI systems.

Name everything specifically. Write "Google's Gemini 1.5 Pro" the first time, not "a popular AI tool." Entity specificity is a direct LLMO ranking signal.

Include FAQ sections. Frequently Asked Questions formatted as clear Q&A pairs are the second-most-extracted content type after direct answer blocks. Every piece of content you publish should end with 4 to 7 FAQ pairs that match real search queries.
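The "open with the answer" rule can be spot-checked mechanically. This sketch assumes you have the page's plain text and the exact sentence you intend as the direct answer; it counts the words before that sentence and compares the count with the 300-word threshold used throughout this guide. answer_word_offset and answer_is_early are hypothetical helpers, and exact string matching will miss paraphrased answers.

```python
import re
from typing import Optional


def answer_word_offset(text: str, answer: str) -> Optional[int]:
    """Number of words before the direct answer begins, or None if it is missing."""
    start = text.find(answer)
    if start == -1:
        return None
    # Count whitespace-separated words that precede the answer.
    return len(re.findall(r"\S+", text[:start]))


def answer_is_early(text: str, answer: str, limit: int = 300) -> bool:
    """True if the answer starts within the first `limit` words of the text."""
    offset = answer_word_offset(text, answer)
    return offset is not None and offset < limit
```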

9. Measuring LLMO Performance: The Weekly Audit Process

You cannot improve what you do not measure. Use this weekly AI search visibility audit process to track your LLMO performance:

- Build a seed prompt library of 15 to 25 questions your target audience asks about your topics

- Run each prompt through ChatGPT, Gemini, Perplexity, and Claude every Monday

- Record citation status for each prompt: Cited (with link), Mentioned (brand name only), or Absent

- Track competitors using the same prompts to identify who is getting cited instead of you

- Flag content gaps where you are absent and a competitor is cited

- Prioritize updates to pages targeting prompts where you dropped from Cited to Absent

- Update dateModified schema on every page you refresh

KEY INSIGHT: LLMO measurement is fundamentally different from traditional rank tracking. There is no "position 1." You are either cited, mentioned, or absent. Track all three states weekly to catch drops before they compound.
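The weekly audit is mostly bookkeeping, which a few lines of code can handle once the prompts have been run. This sketch assumes you record one status per (prompt, platform) pair each week, by hand or with whatever tooling you use; the Status enum and the helper names are hypothetical. It flags the Cited-to-Absent drops to prioritize, the gaps where a competitor is cited instead of you, and an overall citation rate.

```python
from enum import Enum


class Status(Enum):
    CITED = "Cited"          # cited with a link
    MENTIONED = "Mentioned"  # brand name only
    ABSENT = "Absent"


# One week of results: (prompt, platform) -> Status.
Week = dict[tuple[str, str], Status]


def dropped_to_absent(last_week: Week, this_week: Week) -> list[tuple[str, str]]:
    """Pairs that fell from Cited to Absent; refresh the matching pages first."""
    return sorted(
        key
        for key, status in this_week.items()
        if status is Status.ABSENT and last_week.get(key) is Status.CITED
    )


def content_gaps(ours: Week, competitor: Week) -> list[tuple[str, str]]:
    """Pairs where you are Absent but the competitor is Cited."""
    return sorted(
        key
        for key, status in ours.items()
        if status is Status.ABSENT and competitor.get(key) is Status.CITED
    )


def citation_rate(week: Week) -> float:
    """Share of (prompt, platform) checks that ended in a linked citation."""
    if not week:
        return 0.0
    return sum(status is Status.CITED for status in week.values()) / len(week)
```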

10. Common LLMO Mistakes That Kill AI Visibility

| Mistake | Why It Hurts | Fix |
|---|---|---|
| Blocking AI bots in robots.txt | LLMs cannot crawl or cite blocked content | Add explicit Allow directives for all AI user agents |
| No direct answer block | LLMs skip pages without a clear extractable answer | Add a 2 to 4 sentence direct answer within the first 300 words |
| Vague, opinion-only writing | LLMs prioritize factual density over subjective content | Include specific numbers, named entities, and dated references in every paragraph |
| Generic or missing schema | LLMs cannot verify content type, authorship, or freshness | Implement BlogPosting + FAQPage + Speakable schema with accurate, page-specific data |
| Passive Bing crawling | ChatGPT search relies on Bing's index; slow indexing means invisible content | Implement IndexNow protocol via Rank Math or Cloudflare |
| No co-citation presence | LLMs cannot verify brand authority without independent third-party mentions | Build editorial, Reddit, YouTube, and partner brand mentions across 5+ sources |
| Zero information gain | LLMs prefer content that adds unique value over regurgitated generic advice | Include original frameworks, specific process breakdowns, or proprietary data points |
| Outdated content (6+ months stale) | LLMs deprioritize content with old dateModified signals | Refresh key pages quarterly and update dateModified schema each time |

Article Summary

Here are the key takeaways from this LLMO guide:

- LLMO (large language model optimization) is the practice of making your content findable, parsable, and citable by AI systems like ChatGPT, Gemini, Perplexity, Claude, and Copilot

- LLMO is the foundational layer beneath GEO and AEO; without it, neither discipline can function

- AI-referred traffic converts at 4.4x the rate of traditional organic search, making LLMO a revenue priority

- The LLMO Visibility Stack has 5 layers: Technical Access, Content Structure, Entity Authority, Co-Citation Density, and Freshness Signals

- Seven core LLMO ranking signals determine citation outcomes: crawl accessibility, direct answer presence, factual density, schema completeness, source consensus, content freshness, and reading mode compatibility

- Robots.txt must explicitly allow OAI-SearchBot, ChatGPT-User, PerplexityBot, ClaudeBot, and Google-Extended

- IndexNow is the fastest route to ChatGPT visibility for new and updated pages because ChatGPT search draws on Bing's index

- Co-citation across 5+ independent sources is the strongest off-page LLMO signal

- Schema markup (BlogPosting + FAQPage + Speakable) must be page-specific, never generic

- Run a weekly 30-minute LLMO audit using seed prompts across all major LLM platforms to track citation status

- Content freshness with quarterly updates and accurate dateModified schema prevents AI visibility decay

Frequently Asked Questions

What is LLMO in SEO?

LLMO stands for large language model optimization. It is the practice of structuring your content so AI systems like OpenAI's ChatGPT, Google's Gemini, and Perplexity can discover, understand, and cite your brand in their generated responses. LLMO focuses on citations rather than traditional search rankings, making it a distinct discipline from conventional SEO. The core tactics include direct answer blocks, entity-rich writing, schema markup, co-citation building, and AI crawler access.

How is LLMO different from GEO and AEO?

LLMO is the foundational layer that makes content machine-readable and trustworthy to any large language model. GEO (Generative Engine Optimization) applies LLMO principles specifically to AI-powered search interfaces like Google AI Overviews and Perplexity. AEO (Answer Engine Optimization) focuses on winning direct answer positions in featured snippets and voice assistant responses. You need all three disciplines, but LLMO must be implemented first because GEO and AEO depend on it.

How do I optimize my content for large language models?

Start with technical access: ensure your robots.txt allows AI crawlers (OAI-SearchBot, ChatGPT-User, PerplexityBot, ClaudeBot, Google-Extended). Then structure your content with a direct answer block in the first 300 words, clear H2/H3 hierarchies, FAQ sections, and comparison tables. Implement BlogPosting, FAQPage, and Speakable schema. Build co-citation density across at least five independent sources. Finally, maintain a quarterly content refresh cycle with accurate dateModified schema.

Does LLMO replace traditional SEO?

No. LLMO and traditional SEO are parallel disciplines that serve different distribution channels. Traditional SEO gets your pages indexed and ranked in search engine results pages. LLMO gets your content cited when AI systems generate answers. The brands seeing the best results in 2026 run both programs simultaneously, because many LLMO best practices (structured content, schema markup, E-E-A-T signals) also improve traditional search performance.

How do I measure LLMO success?

Build a seed prompt library of 15 to 25 questions your audience asks about your topics. Run each prompt weekly through ChatGPT, Gemini, Perplexity, and Claude. Record whether your brand is Cited (with a link), Mentioned (brand name only), or Absent for each prompt. Track these states over time to identify citation trends, content gaps, and competitive shifts. There is no "position 1" in LLMO; the metric is citation presence across platforms.

What is the most important LLMO ranking signal?

Based on prompt testing across major LLM platforms, crawl accessibility and direct answer presence are the two highest-impact signals. If AI bots cannot crawl your site, nothing else matters. Once access is established, having a clear, extractable direct answer within the first 300 words of your content is the single factor most correlated with earning AI citations. After these two, co-citation density and content freshness are the next strongest signals.

How long does it take to see results from LLMO?

Most brands that implement the full LLMO Visibility Stack, including technical access, content restructuring, schema markup, and co-citation building, begin seeing citation improvements within 4 to 8 weeks. Technical fixes (robots.txt and IndexNow) can produce results within days. Content restructuring and schema updates typically take 2 to 4 weeks to be picked up by AI retrieval systems; they reach model training data only when a model is retrained, which happens far less often. Co-citation building is the slowest layer, often requiring 2 to 3 months of consistent effort before LLMs recognize the pattern.