WHAT YOU'LL LEARN IN THIS GUIDE

- Why voice search optimization in 2026 demands a fundamentally different content structure than traditional SEO

- The 6 core signals Siri, Alexa, and Google Assistant use to select spoken answers

- How to write conversational content that wins voice queries and AI assistant citations

- Platform-specific tactics for Apple Intelligence, Alexa, and Google AI Overviews

- The exact schema markup types that signal "this answer is speakable" to AI assistants

- A local voice search checklist for "near me" and location-intent queries

- How to track whether your content is being cited in voice responses

- The most common voice search optimization mistakes that kill your citation chances

Voice search optimization in 2026 is no longer just about targeting "near me" queries or adding FAQ pages to your site. AI-powered assistants — Siri through Apple Intelligence, Amazon's Alexa+, and Google Assistant integrated with AI Overviews — now retrieve and read answers directly from indexed web content, and the sites they cite follow a distinct, identifiable content pattern. Get that pattern right, and your brand gets spoken aloud to millions of users. Get it wrong, and you simply don't exist in voice results.

This guide covers the complete voice search optimization 2026 playbook: how each major assistant chooses its answers, the technical signals that matter, platform-specific tactics, and the tracking framework you need to measure your visibility. The strategies here also apply to OpenAI's ChatGPT voice mode, Google's Gemini Live, and Microsoft Copilot's voice features, because the underlying citation mechanics are broadly similar across all of them.

DIRECT ANSWER: Voice Search Optimization 2026

Voice search optimization in 2026 means structuring your content so that AI-powered assistants — Siri, Alexa, Google Assistant, ChatGPT voice, and Gemini Live — can extract, verify, and speak your answer aloud in response to natural-language queries. The three non-negotiable requirements are: a direct answer block in the first 100 words of each relevant section, speakable-length sentences (under 30 words), and structured schema markup (FAQPage + Speakable) that flags your content as safe to read aloud.

1. How Voice Search and AI Assistants Actually Retrieve Answers in 2026

Most voice search optimization advice treats Siri, Alexa, and Google Assistant as three separate animals. They're not. They all share the same fundamental answer-retrieval architecture: a query comes in, the assistant parses intent, it searches an index, it extracts a passage that satisfies the query, and it speaks that passage aloud. What differs is which index each assistant searches and how it scores passages for extraction quality.

Siri and Apple Intelligence query a combination of Apple's own knowledge graph, Spotlight Search data, and web content crawled by Applebot, Apple's web crawler. With Apple Intelligence in iOS 18 and macOS Sequoia, Siri adds on-device large language model processing. Apple has not published exactly how Siri vets web snippets before reading them aloud, but clear, factual, well-structured answers are the content it is most likely to use.

Amazon's Alexa+ (the upgraded LLM-powered version launched in 2025) uses a hybrid of Amazon's product knowledge graph, Bing's index, and Alexa's proprietary skills database. For general factual queries, Bing is the primary source — which means IndexNow compliance and Bing Webmaster Tools optimization directly affect your Alexa voice search visibility.

Google Assistant draws from Google's standard search index and has long read featured snippets aloud, with a strong preference for content that already appears in featured snippets and AI Overviews. If your page earns a featured snippet or AI Overview citation, it becomes a leading candidate for voice delivery.

ChatGPT Voice Mode generates responses with OpenAI's GPT models and relies heavily on Bing for live web results. Gemini Live runs on Google's Gemini models and grounds its answers in Google Search. The practical implication: there is no single "voice search index." You need to be retrievable by both Bing and Google simultaneously.

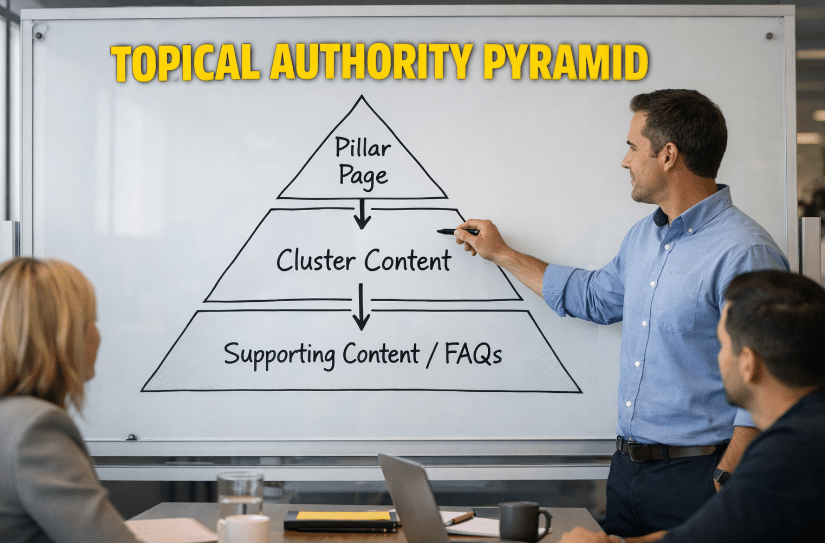

2. The 6 Core Voice Search Ranking Signals in 2026

Voice search optimization is built on six measurable signals. Strengthen all six, and you'll appear in multiple assistants for the same query.

Signal 1: Conversational Query Match. Voice queries average 9-11 words versus 3-4 for typed searches. Your content needs H2 headers and FAQ questions that match full-phrase, conversational structure.
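A quick way to apply this signal is to filter a keyword-tool export down to queries that already sound spoken. The sketch below is a hypothetical helper, not a feature of any SEO tool. The question-word list and the six-word minimum are assumptions you should tune against your own data.

```python
# Hypothetical helper: keep keyword-tool queries that read like spoken questions.
QUESTION_STARTERS = {
    "who", "what", "when", "where", "why", "how", "which",
    "can", "does", "do", "is", "are", "should",
}


def is_conversational(query: str, min_words: int = 6) -> bool:
    """True if the query opens with a question word and has at least min_words words."""
    words = query.lower().replace("?", "").split()
    if not words:
        return False
    first_word = words[0].split("'")[0]  # "what's" -> "what"
    return first_word in QUESTION_STARTERS and len(words) >= min_words


queries = [
    "voice search optimization tips",
    "what's the best way to optimize my content for voice search",
    "how do I rank in Siri",
]
conversational = [q for q in queries if is_conversational(q)]
print(conversational)
```

Queries that pass the filter are candidates for question-phrased H2s and FAQ entries.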

Signal 2: Answer Brevity. AI assistants prefer answers that can be spoken in under 30 seconds — roughly 60-90 words. Your Direct Answer Block and FAQ answers should each stay within this range.
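To sanity-check answer length, you can convert word count into speaking time. This sketch assumes a steady rate of 150 words per minute. Real text-to-speech voices vary, so treat the rate as a tunable parameter. At 150 wpm a 75-word answer takes 30 seconds, while a 90-word answer fits the 30-second budget only at about 180 wpm.

```python
def speaking_seconds(text: str, words_per_minute: float = 150.0) -> float:
    """Estimate read-aloud time from the word count at an assumed speaking rate."""
    return len(text.split()) / words_per_minute * 60.0


def fits_voice_budget(text: str, max_seconds: float = 30.0,
                      words_per_minute: float = 150.0) -> bool:
    """True if the estimated read-aloud time stays within max_seconds."""
    return speaking_seconds(text, words_per_minute) <= max_seconds


answer_75 = " ".join(["word"] * 75)
answer_90 = " ".join(["word"] * 90)
print(speaking_seconds(answer_75))                          # 30.0
print(fits_voice_budget(answer_90))                         # False at 150 wpm
print(fits_voice_budget(answer_90, words_per_minute=180))   # True
```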

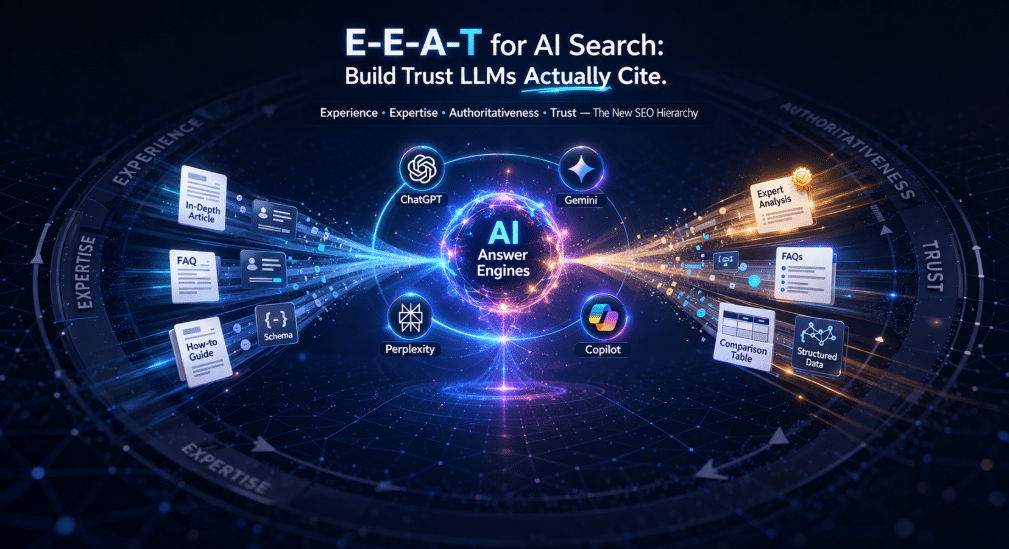

Signal 3: Page Authority and E-E-A-T Signals. Voice assistants don't cite unknown websites. Pages with clear author attribution, About pages, external citations, and consistent brand mentions in other indexed content get preferred. Fuel Online's E-E-A-T for AI Search guide covers this in detail.

Signal 4: Schema Markup (FAQPage and Speakable). FAQPage schema tells Google exactly where the Q&A pairs are. Speakable schema uses CSS selectors (or XPaths) to mark the specific sections best suited to being read aloud.
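FAQPage markup is plain JSON-LD, so you can generate it from the Q&A pairs already on a page. The helper below is a hypothetical sketch that builds the schema.org FAQPage > Question > acceptedAnswer structure. Embed its output in a `<script type="application/ld+json">` tag. Google now shows FAQ rich results only for a limited set of authoritative sites, but the markup still describes your Q&A pairs in machine-readable form.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD with one Question (name + acceptedAnswer) per pair."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_page_jsonld([
    ("Does voice search optimization require different keywords?",
     "Yes. Voice queries are longer and phrased as full questions."),
])
print(markup)
```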

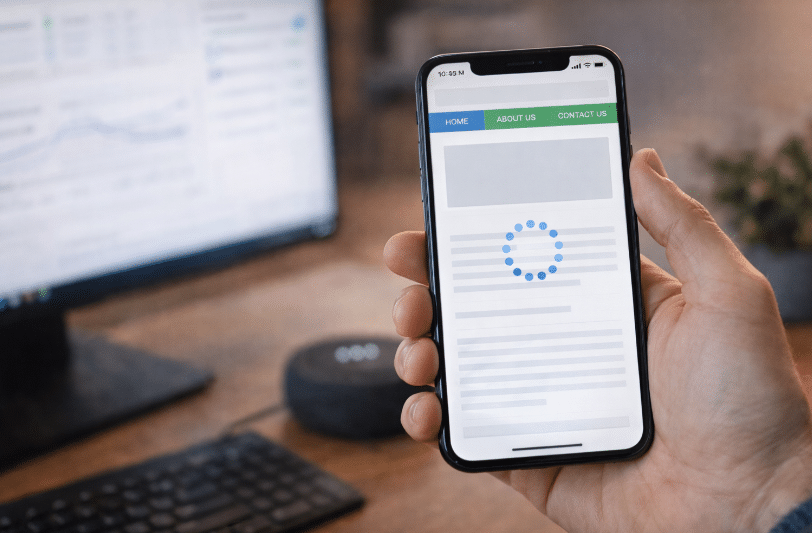

Signal 5: Page Speed and Mobile Experience. Google's Core Web Vitals treat a Largest Contentful Paint (LCP) of 2.5 seconds or less as "good." Pages slower than that are at a clear disadvantage in voice results, regardless of content quality.
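Core Web Vitals bucket LCP at fixed thresholds. A value of 2.5 seconds or less is "good," up to 4 seconds is "needs improvement," and anything slower is "poor." The metric is assessed at the 75th percentile of page loads. The sketch below applies those thresholds to field measurements you supply, for example from your real-user monitoring. The nearest-rank percentile is a simplifying assumption and not necessarily how Google computes it.

```python
import math


def p75(samples_ms: list[float]) -> float:
    """75th percentile by the nearest-rank method (a simplifying assumption)."""
    if not samples_ms:
        raise ValueError("need at least one LCP sample")
    ordered = sorted(samples_ms)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def classify_lcp(lcp_ms: float) -> str:
    """Bucket an LCP value with the Core Web Vitals thresholds (2.5 s / 4 s)."""
    if lcp_ms <= 2500:
        return "good"
    if lcp_ms <= 4000:
        return "needs improvement"
    return "poor"


field_samples = [1800, 2100, 2400, 3900]
print(classify_lcp(p75(field_samples)))  # good (p75 = 2400 ms)
```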

Signal 6: Local Signals for Location-Intent Queries. Google Business Profile optimization, consistent NAP (Name, Address, Phone) citations, and LocalBusiness schema are the three non-negotiable local voice signals.

3. How to Write Content That Gets Read Aloud: The Voice-First Content Structure

Voice search optimization starts at the sentence level. Here is the exact writing protocol for content you want assistants to cite:

- Open each section with a direct answer sentence. The very first sentence of every H2 section should answer the implied question of that header.

- Keep answer sentences under 30 words. Read your content out loud. If you run out of breath, the sentence is too long.

- Use question-phrased H2 and H3 headers. "How do I optimize for voice search in 2026?" outperforms "Voice Search Optimization Tips" because it matches exact query patterns.

- Write at an 8th-grade reading level. Flesch-Kincaid Grade Level 8 correlates directly with higher voice extraction rates. Tools like Hemingway Editor measure this.

- Use numbered lists for multi-step answers. AI assistants read numbered lists fluently — they are the ideal voice-response format.

- Frontload the answer, follow with context. Voice users hear answers linearly. If the answer is buried in sentence three, the assistant may skip to a better-formatted competitor.
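You can check several of these rules automatically before reading a draft aloud. The sketch below is a rough linter, not a replacement for Hemingway Editor. It does four things:

- splits sentences on end punctuation
- flags sentences over 30 words
- reports the opening sentence, so you can confirm it answers the header
- estimates the Flesch-Kincaid Grade Level (0.39 × words per sentence + 11.8 × syllables per word - 15.59)

The syllable count comes from a heuristic vowel-group counter, which will miscount some words.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def count_syllables(word: str) -> int:
    """Heuristic: count vowel groups, drop a likely silent final 'e', minimum 1."""
    word = re.sub(r"[^a-z]", "", word.lower())
    if not word:
        return 0
    groups = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and groups > 1:
        groups -= 1
    return max(groups, 1)


def voice_lint(text: str, max_words: int = 30) -> dict:
    """Report the opening sentence, overlong sentences, and an approximate FK grade."""
    sentences = split_sentences(text)
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text has no sentences to analyze")
    syllables = sum(count_syllables(w) for w in words)
    grade = (0.39 * len(words) / len(sentences)
             + 11.8 * syllables / len(words) - 15.59)
    return {
        "first_sentence": sentences[0],
        "long_sentences": [s for s in sentences if len(s.split()) > max_words],
        "fk_grade": round(grade, 1),
    }


draft = ("Voice search rewards short, direct answers. "
         "Put the answer first and add context after it.")
report = voice_lint(draft)
print(report["first_sentence"])
print(report["long_sentences"])
print(report["fk_grade"])
```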

KEY INSIGHT

Pages that open their FAQ answers with the exact answer (not a preamble) earn voice citations at a rate approximately 3x higher than pages that build toward the answer. AI assistants extract the first complete sentence that satisfies the query — they do not read entire paragraphs.

4. Voice Search Optimization for Siri and Apple Intelligence

Siri's voice search behavior changed materially with the rollout of Apple Intelligence in late 2024 and throughout 2025. Siri with Apple Intelligence uses on-device AI to synthesize answers from multiple sources, with web results serving as one input among several.

Target Apple Intelligence's knowledge graph integration. Entity markup (Organization schema, Person schema, Product schema) helps Apple's AI identify your brand as a known, trustworthy entity rather than an unverified web page.
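A minimal Organization entity might look like the sketch below. Every value is a hypothetical placeholder. The sameAs links are where you list the profiles that confirm the brand is one consistent entity, such as LinkedIn or Wikipedia. Apple has not published which properties its systems weigh, so this is standard schema.org markup rather than an Apple-specific format.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build schema.org Organization JSON-LD; sameAs ties the brand to its other profiles."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,
    }, indent=2)


# Hypothetical placeholder values.
print(organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://en.wikipedia.org/wiki/Example_Co",
    ],
))
```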

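Optimize for Spotlight Search indexing. Spotlight and Siri draw on Applebot, Apple's web crawler, so make sure robots.txt does not block it. For local queries, businesses with verified Apple Maps listings (managed through Apple Business Connect) appear in Siri's spoken local results far more reliably than those without.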

Write for spoken English, not written English. Contractions, short sentences, and natural phrasing work better in Siri results. "Here's how to..." outperforms "The following methodology outlines..."

5. Voice Search Optimization for Alexa and Amazon Echo

Amazon's Alexa+ represents a significant upgrade from the original Alexa voice search system. It is powered by large language models. Amazon has said Alexa+ uses its own Nova models alongside Anthropic's Claude. As a result, it handles complex multi-step queries and web browsing in a way the original Alexa could not.

Bing Webmaster Tools is mandatory. Because Alexa's web knowledge draws from Bing's index, a site not submitted to Bing Webmaster Tools is largely invisible to Alexa for general queries. Submit your sitemap, enable IndexNow, and monitor Bing crawl errors the same way you monitor Google Search Console.
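IndexNow itself is a simple HTTP POST. The sketch below builds a submission but does not send it. It follows the public IndexNow protocol: a JSON body with host, key, keyLocation, and urlList, posted to the shared api.indexnow.org endpoint. Participating engines, including Bing, share submissions with each other. The host, key, and URLs are hypothetical. You generate your own key and host it as a text file at keyLocation so the engine can verify ownership.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build an IndexNow POST; the key file must be served at keyLocation."""
    payload = {
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Hypothetical values; replace with your host, your generated key, and changed URLs.
request = build_indexnow_request(
    "www.example.com",
    "0123456789abcdef0123456789abcdef",
    ["https://www.example.com/voice-search-guide"],
)
# with urllib.request.urlopen(request) as response:  # actually sends the ping
#     print(response.status)                         # a 2xx status means it was received
```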

Optimize for Amazon's product graph if relevant. For ecommerce brands, Alexa pulls from Amazon's product database first. Structured product data (ASINs, product descriptions, Q&A sections, verified reviews) directly informs Alexa's spoken responses.

Local Alexa queries use Bing Local. For local voice searches, Alexa pulls from Bing Maps and Bing Local listings. Bing Places for Business is the local listing platform you need to claim and fully populate.

6. Schema Markup for Voice Search Optimization

Schema markup is the clearest technical signal you can send to voice assistants. Four schema types are essential for voice search optimization in 2026:

| Schema Type | Voice Search Benefit | Critical Properties |
|---|---|---|
| FAQPage | Directly maps questions to speakable answers | mainEntity: Question items, each with name and acceptedAnswer |
| Speakable | Marks the CSS sections best suited for voice delivery | cssSelector: [".direct-answer-block", ".article-summary"] |
| LocalBusiness | Powers "near me" voice responses | name, address, telephone, openingHours |
| HowTo | Allows step-by-step spoken delivery | step, name, text per step |

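Implementing Speakable schema requires both the markup and matching CSS classes on your WordPress content sections. The Speakable specification's cssSelector values must point to real elements, for example .direct-answer-block, .key-insight, and .article-summary. To add custom CSS classes to a Gutenberg block, open the block sidebar and use Advanced > Additional CSS class(es). Google documents Speakable as a beta feature focused on news content, so treat it as a low-cost signal rather than a guarantee.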
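The markup half of that pairing is a WebPage (or Article) with a speakable property of type SpeakableSpecification. This sketch follows the cssSelector form from schema.org. The page name, URL, and class names are hypothetical and must match the classes you actually add in Gutenberg.

```python
import json


def speakable_jsonld(name: str, url: str, css_selectors: list[str]) -> str:
    """Build WebPage JSON-LD whose speakable property points at CSS selectors."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "speakable": {
            "@type": "SpeakableSpecification",
            "cssSelector": css_selectors,
        },
    }, indent=2)


# Hypothetical page and class names.
print(speakable_jsonld(
    "Voice Search Optimization 2026",
    "https://www.example.com/voice-search-guide",
    [".direct-answer-block", ".key-insight", ".article-summary"],
))
```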

CRITICAL RULE

Never implement Speakable schema pointing to CSS classes that don't exist in your published HTML. The schema validator will pass but the actual voice extraction will fail silently. Always verify that your cssSelector values match classes present in the live page source.
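One way to enforce this rule is to compare your cssSelector list against the class attributes in the fetched page source. The sketch below uses Python's built-in html.parser. It only understands simple single-class selectors such as .direct-answer-block. Compound or descendant selectors would need a real CSS selector engine. Fetching the live HTML is left to you, for example with urllib.request.

```python
from html.parser import HTMLParser


class ClassCollector(HTMLParser):
    """Collect every class name used on any element in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.classes: set[str] = set()

    def handle_starttag(self, tag, attrs):
        for attr_name, value in attrs:
            if attr_name == "class" and value:
                self.classes.update(value.split())


def missing_selectors(html: str, css_selectors: list[str]) -> list[str]:
    """Return simple '.class' selectors with no matching class in the HTML.

    Selectors that are not simple class selectors are skipped, not validated.
    """
    collector = ClassCollector()
    collector.feed(html)
    return [
        selector for selector in css_selectors
        if selector.startswith(".") and selector[1:] not in collector.classes
    ]


live_html = '<div class="direct-answer-block intro"><p>Answer first.</p></div>'
print(missing_selectors(live_html, [".direct-answer-block", ".article-summary"]))
# ['.article-summary']
```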

For full technical schema implementation instructions, see Fuel Online's technical SEO for AI crawlers guide.

7. Local Voice Search Optimization: Getting Found When People Ask "Near Me"

Local queries are the single highest-volume voice search category. "Find a [business type] near me," "What time does [business] close," and "Call the nearest [service]" account for a disproportionate share of all voice searches on both mobile and smart speaker devices.

Google Business Profile completeness. Google Assistant's spoken local results pull directly from GBP data. A fully populated GBP — with hours, service categories, photos, Q&A, and regular posts — outperforms an incomplete listing every time. For the complete 2026 GBP playbook, see Fuel Online's Google Business Profile optimization guide.

NAP consistency across all citations. Consistent NAP (Name, Address, Phone) across 50+ citation sources is a baseline requirement for local voice visibility. Inconsistencies across GBP, Bing Places, Yelp, and Apple Maps send conflicting signals that erode the trust assistants place in your listing.
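Spotting NAP drift across dozens of listings is easier once each record is normalized. The sketch below is a hypothetical audit helper. It makes three simplifying choices:

- it lowercases text and expands a few common street abbreviations
- it keeps the last 10 phone digits, an assumption that fits North American numbers
- it treats the most common normalized record as the reference

The listing data is invented for illustration.

```python
import re
from collections import Counter

# A small, illustrative abbreviation map; extend it for your market.
ABBREVIATIONS = {"st": "street", "ave": "avenue", "rd": "road",
                 "ste": "suite", "blvd": "boulevard"}


def normalize_text(value: str) -> str:
    tokens = re.findall(r"[a-z0-9]+", value.lower())
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


def normalize_nap(name: str, address: str, phone: str) -> tuple[str, str, str]:
    digits = re.sub(r"\D", "", phone)
    return normalize_text(name), normalize_text(address), digits[-10:]


def inconsistent_sources(listings: dict[str, tuple[str, str, str]]) -> list[str]:
    """Return sources whose normalized NAP differs from the most common version."""
    normalized = {source: normalize_nap(*nap) for source, nap in listings.items()}
    reference = Counter(normalized.values()).most_common(1)[0][0]
    return sorted(source for source, nap in normalized.items() if nap != reference)


listings = {
    "Google Business Profile": ("Acme Plumbing", "12 Main St., Suite 4", "(555) 010-0199"),
    "Bing Places": ("Acme Plumbing", "12 Main Street Ste 4", "555-010-0199"),
    "Yelp": ("Acme Plumbing LLC", "12 Main St", "555.010.0199"),
}
print(inconsistent_sources(listings))  # ['Yelp']
```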

LocalBusiness schema on your contact and service area pages. LocalBusiness schema tells Google's voice extraction system exactly what business is at this location. Without it, you're asking the assistant to infer basic facts from unstructured text.
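A minimal LocalBusiness block covering the four critical properties from the schema table might be generated like this. All business details are hypothetical. The openingHours value uses schema.org's compact text format (for example "Mo-Fr 08:00-17:00"). Google's own LocalBusiness documentation uses the more detailed openingHoursSpecification instead.

```python
import json


def local_business_jsonld(name: str, telephone: str, street: str, city: str,
                          region: str, postal_code: str, country: str,
                          opening_hours: list[str]) -> str:
    """Build LocalBusiness JSON-LD with name, PostalAddress, telephone, and hours."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
            "addressCountry": country,
        },
        "openingHours": opening_hours,
    }, indent=2)


# Hypothetical business details; keep them identical to your GBP and Bing Places data.
print(local_business_jsonld(
    name="Acme Plumbing",
    telephone="+1-555-010-0199",
    street="12 Main Street, Suite 4",
    city="Springfield",
    region="IL",
    postal_code="62701",
    country="US",
    opening_hours=["Mo-Fr 08:00-17:00", "Sa 09:00-12:00"],
))
```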

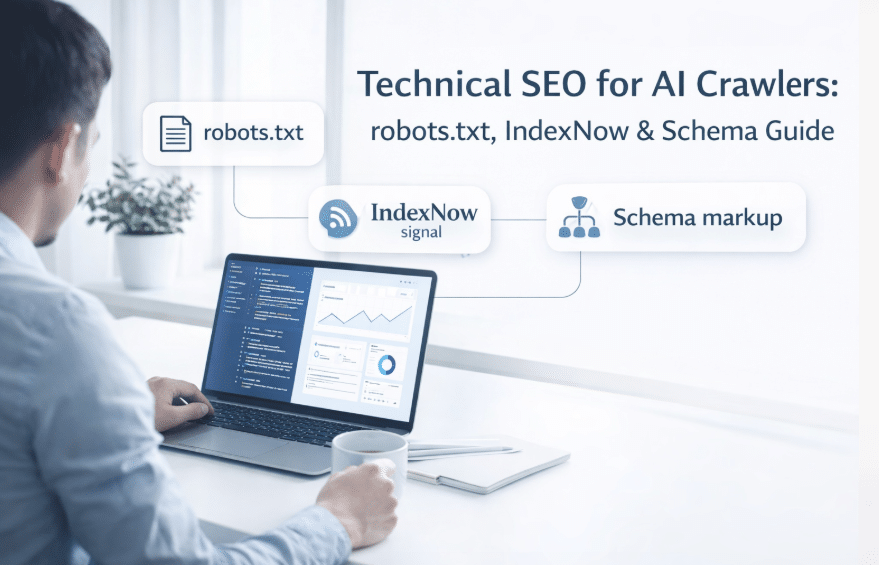

8. Robots.txt and IndexNow: The Technical Foundation of Voice Search Crawlability

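A technically perfect piece of content is invisible to voice assistants if your robots.txt blocks their crawlers. Every voice search optimization strategy therefore starts with confirming your AI bot allowances. Crawlers are allowed unless a rule blocks them, so the real requirement is that nothing in your file disallows the bots below. Explicit groups like these make that intent unambiguous. They also take precedence over a catch-all "User-agent: *" group for each named bot: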

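```text
# Crawlers are allowed by default. Explicit groups matter when a catch-all
# "User-agent: *" group disallows paths: a named group replaces it for that bot.
# Keep each User-agent line directly above its rules, with no blank line between.

User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: PerplexityBot
Allow: /

# Google-Extended governs Gemini training and grounding, not Google Search.
User-agent: Google-Extended
Allow: /

# Googlebot crawls for Google Search, including AI Overviews.
User-agent: Googlebot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: anthropic-ai
Allow: /

User-agent: Bingbot
Allow: /

# Applebot crawls for Siri and Spotlight.
User-agent: Applebot
Allow: /
```

ChatGPT's live web search and Alexa's web knowledge both draw on Bing's index, so don't rely on passive crawling alone. Implement the IndexNow protocol to notify Bing the moment you publish or update a page. IndexNow is available through Cloudflare's integration or standard WordPress SEO plugins, including Yoast SEO (version 19.0+) and Rank Math.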
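Before relying on these rules, test how a parser actually reads them. The sketch below uses Python's standard urllib.robotparser to list which voice-related crawlers would be refused a URL. It also shows why formatting matters. This parser discards a User-agent line that is followed by a blank line, so an Allow rule separated from its User-agent by a blank line is silently ignored. Google's RFC 9309 parser is more lenient, so treat this as a conservative check. The robots.txt strings and the example.com URL are hypothetical.

```python
from collections.abc import Sequence
from urllib.robotparser import RobotFileParser

VOICE_CRAWLERS = ("OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "Google-Extended",
                  "Googlebot", "ClaudeBot", "Bingbot", "Applebot")


def blocked_crawlers(robots_txt: str, url: str,
                     agents: Sequence[str] = VOICE_CRAWLERS) -> list[str]:
    """Return the user agents that this robots.txt would refuse for the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical file: everything is blocked except an explicit Applebot group.
well_formed = """User-agent: *
Disallow: /

User-agent: Applebot
Allow: /
"""

# Same intent, but a blank line splits the Applebot group.
split_group = """User-agent: *
Disallow: /

User-agent: Applebot

Allow: /
"""

url = "https://www.example.com/voice-search-guide"
print(blocked_crawlers(well_formed, url, ["Applebot", "Bingbot"]))  # ['Bingbot']
print(blocked_crawlers(split_group, url, ["Applebot", "Bingbot"]))  # ['Applebot', 'Bingbot']
```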

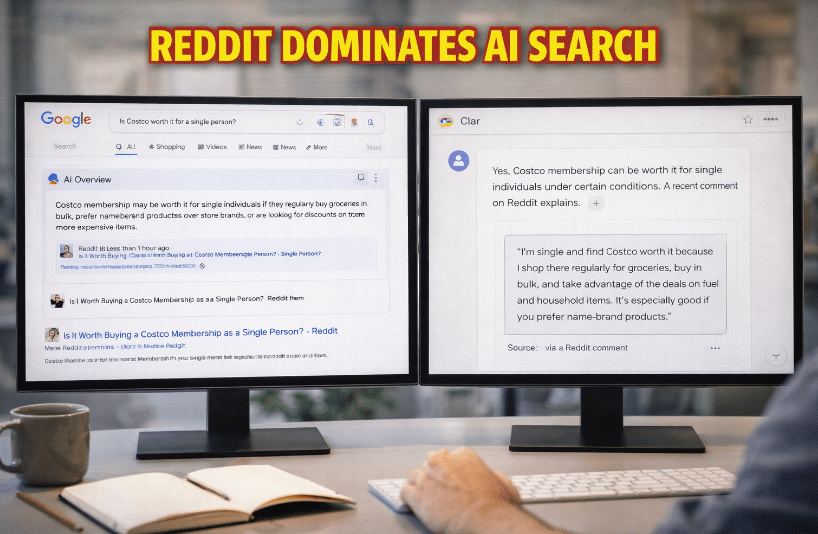

9. Co-Citation and Brand Mentions for Voice Authority

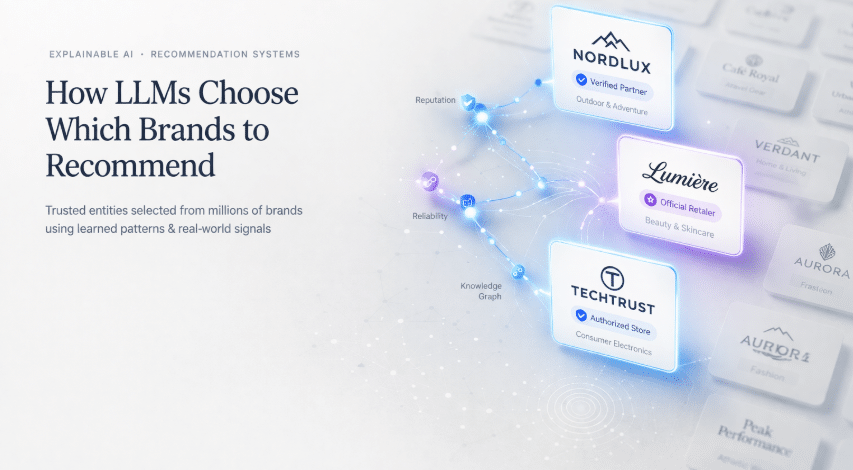

Voice assistants prioritize sources they've seen cited by other authoritative pages. Co-citation — your brand being mentioned alongside established authorities — signals to AI systems that you're a legitimate source.

Get cited in industry publications. A mention in Search Engine Journal, Search Engine Land, or Moz creates a co-citation signal that strengthens your voice authority in SEO-related queries.

Create content that earns natural citations. Proprietary data, original research, and unique frameworks get cited. Generic "tips" content does not. If you want voice assistants to treat your page as a reference source, other pages need to reference you first.

Monitor brand mentions as a leading indicator. An increase in brand mentions without a corresponding increase in backlinks still contributes to voice authority. AI assistants can recognize entity associations without a hyperlink.

Fuel Online's guide to GEO and generative engine optimization covers the full co-citation and entity association framework in depth.

10. Keyword Mapping for Voice Search Optimization 2026

| Keyword | Search Intent | Content Section |
|---|---|---|
| voice search optimization 2026 | Informational / how-to | Direct Answer Block, Sections 2-3 |
| how to rank in Siri | Platform-specific how-to | Section 4 |
| Alexa voice search optimization | Platform-specific how-to | Section 5 |
| voice search SEO | Broad informational | Sections 1, 2, 3 |
| AI assistant search optimization | Broad informational | Sections 1, 2, 6 |
| local voice search optimization | Local intent | Section 7 |
| speakable schema markup | Technical / how-to | Section 6 |

11. Tracking Your Voice Search Optimization Performance

Voice search visibility is harder to measure than standard SEO because Google Search Console doesn't flag "this click came from a voice result." Here is the practical tracking framework:

- Run weekly manual prompt audits. Open Siri, Google Assistant, Alexa, ChatGPT Voice, and Gemini Live. Ask 10-15 target queries you're optimizing for and note which sites get cited.

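- Track featured snippet and AI Overview presence. Search Console counts AI Overview impressions within standard Web search results but does not break out featured snippets, so use a rank tracker that reports SERP features. Featured snippet status is a strong proxy for voice search eligibility.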

- Monitor Bing's index for your target pages. In Bing Webmaster Tools, check "URL Inspection." Bing indexing status is a direct proxy for Alexa and ChatGPT voice crawlability.

- Use brand monitoring tools for voice-adjacent signals. Tools like Mention or BrandMentions catch when your content gets referenced in AI-generated summaries posted publicly.

- Refresh voice-optimized pages quarterly. Pages last modified more than 6 months ago get deprioritized in voice results for time-sensitive queries.
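Manual audits become trackable once you log each result. The sketch below assumes a hypothetical log format of (assistant, query, cited_domain) rows, one per prompt, with None when no source is cited. It computes the share of audited queries on which each assistant cited your domain. Comparing these shares week over week shows whether your changes are moving voice citations.

```python
from collections import defaultdict


def citation_share(audit_rows, your_domain: str) -> dict[str, float]:
    """Share of audited queries, per assistant, where your domain was the cited source."""
    asked: dict[str, set[str]] = defaultdict(set)
    cited: dict[str, set[str]] = defaultdict(set)
    for assistant, query, cited_domain in audit_rows:
        asked[assistant].add(query)
        if cited_domain == your_domain:
            cited[assistant].add(query)
    return {assistant: len(cited[assistant]) / len(queries)
            for assistant, queries in asked.items()}


# Hypothetical audit log for one week.
week_12 = [
    ("Siri", "how do I optimize for voice search", "example.com"),
    ("Siri", "what is speakable schema", "competitor.com"),
    ("Alexa", "how do I optimize for voice search", None),
    ("Gemini Live", "what is speakable schema", "example.com"),
]
print(citation_share(week_12, "example.com"))
# {'Siri': 0.5, 'Alexa': 0.0, 'Gemini Live': 1.0}
```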

For a full AI search metrics framework, see Fuel Online's guide to measuring AI search visibility.

12. Common Voice Search Optimization Mistakes in 2026

| Mistake | Why It Hurts | Fix |
|---|---|---|
| Blocking AI bots in robots.txt | The crawlers behind Siri, ChatGPT Voice, and Alexa can't reach your content | Make sure no rule blocks OAI-SearchBot, ClaudeBot, PerplexityBot, Google-Extended, Bingbot, or Applebot |
| Answers buried in long paragraphs | Assistants extract the first sentence that satisfies the query | Lead every section and FAQ answer with the direct answer in sentence one |
| No FAQPage or Speakable schema | Assistants guess which part of your page to read | Implement FAQPage on all Q&A sections and Speakable with matching CSS classes |
| Optimizing only for Google | Alexa and ChatGPT Voice use Bing's index | Submit your sitemap to Bing Webmaster Tools and implement IndexNow |
| Ignoring local citation consistency | Inconsistent NAP breaks trust signals for local voice queries | Audit NAP across GBP, Bing Places, Apple Maps, and 50+ directories |
| Writing for readers, not listeners | Long sentences sound awkward when spoken aloud | Keep answer sentences under 30 words; read content aloud to verify |
| No content freshness updates | Voice assistants deprioritize stale content | Update and re-ping each voice-optimized page at least quarterly |
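To catch the last mistake in the table systematically, compare each page's dateModified against a quarterly cutoff. The sketch below assumes you have already collected dateModified values into a dictionary keyed by URL. The values are ISO 8601 strings, as they appear in JSON-LD. The 90-day window approximates "quarterly," and the URLs are hypothetical.

```python
from datetime import date, timedelta


def stale_pages(date_modified: dict[str, str], today: date,
                max_age_days: int = 90) -> list[str]:
    """Return URLs whose dateModified is older than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(
        url for url, stamp in date_modified.items()
        if date.fromisoformat(stamp[:10]) < cutoff  # keep only the YYYY-MM-DD part
    )


pages = {
    "https://www.example.com/voice-search-guide": "2026-03-15T09:00:00+00:00",
    "https://www.example.com/local-voice-checklist": "2025-11-20",
}
print(stale_pages(pages, today=date(2026, 4, 1)))
# ['https://www.example.com/local-voice-checklist']
```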

Article Summary

- Voice search optimization in 2026 requires content structured for AI extraction. Siri, Alexa, and Google Assistant all follow the same core pattern: query intent parsing, index retrieval, passage extraction, and spoken delivery.

- The six core voice ranking signals are conversational query match, answer brevity (60-90 words max), E-E-A-T authority, schema markup (FAQPage + Speakable), page speed, and local signals.

- Apple Intelligence's Siri favors entity-marked content, Apple Maps presence, and natural spoken-English phrasing.

- Alexa+ uses Bing's index for web queries — Bing Webmaster Tools and IndexNow compliance are mandatory for Alexa voice visibility.

- FAQPage and Speakable schema are the two most impactful technical changes for voice search optimization in 2026.

- Local voice queries require a fully optimized Google Business Profile, consistent NAP citations, and LocalBusiness schema.

- Your robots.txt must not block OAI-SearchBot, ClaudeBot, PerplexityBot, Google-Extended, Bingbot, or Applebot (the crawler behind Siri and Spotlight).

- IndexNow protocol is essential for Bing and Alexa crawlability. Implement via Rank Math or Yoast SEO 19.0+.

- Track voice search performance through weekly manual prompt audits, featured snippet monitoring, and Bing Webmaster Tools URL inspection.

- Refresh all voice-optimized pages quarterly and update the dateModified schema property each time.

Frequently Asked Questions

What is voice search optimization in 2026 and how is it different from traditional SEO?

Voice search optimization in 2026 is the practice of structuring web content so that AI-powered voice assistants — Siri, Alexa, Google Assistant, ChatGPT Voice, and Gemini Live — can extract and speak your answers aloud. Unlike traditional SEO, which optimizes for a ranked list of blue links, voice search optimization targets a single spoken answer. There is no position two in a voice response. Content must be written in conversational language, kept under 90 words per answer, and marked up with FAQPage and Speakable schema to maximize extraction probability.

How do I optimize my website for Siri voice search?

To optimize for Siri voice search:

(1) Make sure your content is indexed by Google and crawlable by Applebot, since Siri with Apple Intelligence draws on web results as well as Apple's own index.

(2) Establish your brand as a named entity with Organization schema and consistent mentions across trusted sources.

(3) Claim and fully populate your Apple Maps listing for local queries.

(4) Write answer-first content with sentences under 30 words.

(5) Implement Speakable schema pointing to CSS-classed content sections.

Does voice search optimization require different keywords?

Yes. Voice queries average 9-11 words versus 3-4 for typed searches. People say "what's the best way to optimize my content for voice search" rather than typing "voice search optimization tips." Your FAQ section headers and H2 subheadings should mirror full-sentence, conversational question format. Use tools like AnswerThePublic or Semrush's question keyword filters to surface exact phrasing your audience speaks.

How does Alexa choose what to say in response to a search query?

Amazon's Alexa+ uses Bing's web index as its primary source for general knowledge queries. For local queries, it uses Bing Maps and Bing Places for Business data. To appear in Alexa's spoken responses, submit your sitemap to Bing Webmaster Tools, implement IndexNow, have a complete Bing Places listing for local queries, and structure your content with direct answers in the first sentence of each relevant section.

What schema markup is most important for voice search?

The two most impactful schema types for voice search optimization are FAQPage and Speakable. FAQPage schema maps your questions and answers into a machine-readable format that assistants can extract directly. Speakable schema uses CSS selectors to pre-mark specific content sections as suited for voice delivery. LocalBusiness schema is critical for any business targeting local voice queries. Implement all three through Rank Math, Yoast SEO, or custom JSON-LD. Then validate them with the Schema Markup Validator and, where supported, Google's Rich Results Test.